GW150914, The Event to its friends, was our first direct observation of gravitational waves. To accompany the detection announcement, the LIGO Scientific & Virgo Collaboration put together a suite of companion papers, each looking at a different aspect of the detection and its implications. Some of the work we wanted to do was not finished at the time of the announcement; in this post I’ll go through the papers we have produced since the announcement.

The papers

I’ve listed the papers below in an order that makes sense to me when considering them together. Each started off as an investigation to check that we really understood the signal and were confident that the inferences made about the source were correct. We had preliminary results for each at the time of the announcement. Since then, the papers have evolved to fill different niches [bonus points note].

13. The Basic Physics Paper

Title: The basic physics of the binary black hole merger GW150914

arXiv: 1608.01940 [gr-qc]

Journal: Annalen der Physik; 529(1–2):1600209(17); 2017

The Event was loud enough to spot by eye after some simple filtering (provided that you knew where to look). You can therefore figure out some things about the source with back-of-the-envelope calculations. In particular, you can convince yourself that the source must be two black holes. This paper explains these calculations at a level suitable for a keen high-school or undergraduate physics student.

More details: The Basic Physics Paper summary

14. The Precession Paper

Title: Improved analysis of GW150914 using a fully spin-precessing waveform model

arXiv: 1606.01210 [gr-qc]

Journal: Physical Review X; 6(4):041014(19); 2016

To properly measure the properties of GW150914’s source, you need to compare the data to predicted gravitational-wave signals. In the Parameter Estimation Paper, we did this using two different waveform models. These models include lots of features of binary black hole mergers, but not quite everything. In particular, they don’t include all the effects of precession (the wibbling of the orbit because of the black holes’ spins). In this paper, we analyse the signal using a model that includes all the precession effects. We find results which are consistent with our initial ones.

More details: The Precession Paper summary

15. The Systematics Paper

Title: Effects of waveform model systematics on the interpretation of GW150914

arXiv: 1611.07531 [gr-qc]

Journal: Classical & Quantum Gravity; 34(10):104002(48); 2017

LIGO science summary: Checking the accuracy of models of gravitational waves for the first measurement of a black hole merger

To check how well our waveform models can measure the properties of the source, we repeat the parameter-estimation analysis on some synthetic signals. These fake signals are calculated using numerical relativity, and so should include all the relevant pieces of physics (even those missing from our models). This paper checks to see if there are any systematic errors in results for a signal like GW150914. It looks like we’re OK, but this won’t always be the case.

More details: The Systematics Paper summary

16. The Numerical Relativity Comparison Paper

Title: Directly comparing GW150914 with numerical solutions of Einstein’s equations for binary black hole coalescence

arXiv: 1606.01262 [gr-qc]

Journal: Physical Review D; 94(6):064035(30); 2016

LIGO science summary: Directly comparing the first observed gravitational waves to supercomputer solutions of Einstein’s theory

Since GW150914 was so short, we can actually compare the data directly to waveforms calculated using numerical relativity. We only have a handful of numerical relativity simulations, but these are enough to give an estimate of the properties of the source. This paper reports the results of this investigation. Unsurprisingly, given all the other checks we’ve done, we find that the results are consistent with our earlier analysis.

If you’re interested in numerical relativity, this paper also gives a nice brief introduction to the field.

More details: The Numerical Relativity Comparison Paper summary

The Basic Physics Paper

Synopsis: Basic Physics Paper

Read this if: You are teaching a class on gravitational waves

Favourite part: This is published in Annalen der Physik, the same journal that Einstein published some of his monumental work on both special and general relativity

It’s fun to play with LIGO data. The Gravitational Wave Open Science Center (GWOSC) has put together a selection of tutorials to show you some of the basics of analysing signals; we also have papers which introduce gravitational wave data analysis. I wouldn’t blame you if you went off to try them now, instead of reading the rest of this post. Even though it would mean that no-one read this sentence. Purple monkey dishwasher.

The GWOSC tutorials show you how to make your own version of some of the famous plots from the detection announcement. This paper explains how to go from these, using the minimum of theory, to some inferences about the signal’s source: most significantly that it must be the merger of two black holes.

GW150914 is a chirp. It sweeps up from low frequency to high. This is what you would expect of a binary system emitting gravitational waves. The gravitational waves carry away energy and angular momentum, causing the binary’s orbit to shrink. This means that the orbital period gets shorter, and the orbital frequency higher. The gravitational wave frequency is twice the orbital frequency (for circular orbits), so this goes up too.

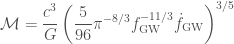

The rate of change of the frequency depends upon the system’s mass. To first approximation, it is determined by the chirp mass,

$$\mathcal{M} = \frac{(m_1 m_2)^{3/5}}{(m_1 + m_2)^{1/5}},$$

where $m_1$ and $m_2$ are the masses of the two components of the binary. By looking at the signal (go on, try the GWOSC tutorials), we can estimate the gravitational wave frequency $f_\mathrm{GW}$ at different times, and so track how it changes. You can rewrite the equation for the rate of change of the gravitational wave frequency $\dot{f}_\mathrm{GW}$, to give an expression for the chirp mass

$$\mathcal{M} = \frac{c^3}{G}\left(\frac{5}{96} \pi^{-8/3} f_\mathrm{GW}^{-11/3} \dot{f}_\mathrm{GW}\right)^{3/5}.$$

Here $c$ and $G$ are the speed of light and the gravitational constant, which usually pop up in general relativity equations. If you use this formula (perhaps fitting for the trend $f_\mathrm{GW}(t)$) you can get an estimate for the chirp mass. By fiddling with your fit, you’ll see there is some uncertainty, but you should end up with a value around $30 M_\odot$ [bonus note].
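If you want to try the chirp-mass formula numerically, here is a minimal Python sketch. The function names are made up for illustration, and the example frequency and the 30 solar mass input are assumed toy values rather than measurements; the script simply checks that the leading-order relation between the frequency derivative and the chirp mass inverts cleanly.

```python
import math

G = 6.67430e-11        # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0      # speed of light, m/s
M_SUN = 1.98847e30     # solar mass, kg


def chirp_mass(m1, m2):
    """Chirp mass from the two component masses (same units in and out)."""
    return (m1 * m2) ** 0.6 / (m1 + m2) ** 0.2


def chirp_mass_from_frequency(f_gw, f_dot):
    """Chirp mass in kg from the GW frequency (Hz) and its rate of change (Hz/s)."""
    bracket = (5.0 / 96.0) * math.pi ** (-8.0 / 3.0) * f_gw ** (-11.0 / 3.0) * f_dot
    return (C ** 3 / G) * bracket ** 0.6


def frequency_derivative(f_gw, mchirp_kg):
    """Inverse relation: leading-order df/dt (Hz/s) for a given chirp mass in kg."""
    return (
        (96.0 / 5.0)
        * math.pi ** (8.0 / 3.0)
        * (G * mchirp_kg / C ** 3) ** (5.0 / 3.0)
        * f_gw ** (11.0 / 3.0)
    )


# Assumed toy inputs: a 30 solar mass chirp mass observed at 50 Hz.
f_example = 50.0
f_dot_example = frequency_derivative(f_example, 30.0 * M_SUN)
recovered = chirp_mass_from_frequency(f_example, f_dot_example) / M_SUN
print(f"df/dt at {f_example:.0f} Hz: {f_dot_example:.0f} Hz/s")
print(f"recovered chirp mass: {recovered:.1f} solar masses")
```

With real data you would replace `f_example` and `f_dot_example` with values read off (or fitted to) the time-frequency track, and the scatter between different times gives a feel for the uncertainty.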

Next, let’s look at the peak gravitational wave frequency (where the signal is loudest). This should be when the binary finally merges. The peak is at about $150~\mathrm{Hz}$. The orbital frequency is half this, so $f_\mathrm{orb} \approx 75~\mathrm{Hz}$. The orbital separation $R$ is related to the frequency by

![\displaystyle R = \left[\frac{GM}{(2\pi f_\mathrm{orb})^2}\right]^{1/3}](https://s0.wp.com/latex.php?latex=%5Cdisplaystyle+R+%3D+%5Cleft%5B%5Cfrac%7BGM%7D%7B%282%5Cpi+f_%5Cmathrm%7Borb%7D%29%5E2%7D%5Cright%5D%5E%7B1%2F3%7D&bg=ffffff&fg=444444&s=0&c=20201002) ,

where $M = m_1 + m_2$ is the binary’s total mass. This formula is only strictly true in Newtonian gravity, and not in full general relativity, but it’s still a reasonable approximation. We can estimate a value for the total mass from our chirp mass; if we assume the two components are about the same mass, then $M = 2^{6/5} \mathcal{M} \approx 70 M_\odot$. We now want to compare the binary’s separation to the size of a black hole with the same mass. A typical size for a black hole is given by the Schwarzschild radius

$$R_\mathrm{S} = \frac{2GM}{c^2}.$$

If we divide the binary separation by the Schwarzschild radius we get the compactness $\mathcal{R} = R/R_\mathrm{S} \approx 1.7$. A compactness of $\sim 1$ could only happen for black holes. We could maybe get a binary made of two neutron stars to have a compactness of $\sim 2$, but the system is too heavy to contain two neutron stars (which have a maximum mass of about $3 M_\odot$). The system is so compact, it must contain black holes!
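The compactness estimate is easy to reproduce. The sketch below uses the Newtonian separation formula and the Schwarzschild radius $2GM/c^2$; the total mass of 70 solar masses and the orbital frequency of 75 Hz are assumed round-number inputs for illustration, and the function names are my own.

```python
import math

G = 6.67430e-11        # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0      # speed of light, m/s
M_SUN = 1.98847e30     # solar mass, kg


def orbital_separation(total_mass_kg, f_orb):
    """Newtonian (Kepler) separation in metres for orbital frequency f_orb in Hz."""
    return (G * total_mass_kg / (2.0 * math.pi * f_orb) ** 2) ** (1.0 / 3.0)


def schwarzschild_radius(mass_kg):
    """Schwarzschild radius in metres."""
    return 2.0 * G * mass_kg / C ** 2


def compactness(total_mass_kg, f_orb):
    """Ratio of the orbital separation to the Schwarzschild radius of the total mass."""
    return orbital_separation(total_mass_kg, f_orb) / schwarzschild_radius(total_mass_kg)


# Assumed illustrative inputs, not fitted values.
total_mass = 70.0 * M_SUN
f_orb = 75.0

separation_km = orbital_separation(total_mass, f_orb) / 1e3
radius_km = schwarzschild_radius(total_mass) / 1e3
ratio = compactness(total_mass, f_orb)
print(f"separation ~ {separation_km:.0f} km, Schwarzschild radius ~ {radius_km:.0f} km")
print(f"compactness ~ {ratio:.2f}")
```

Try changing the inputs within whatever uncertainty you found from your own fit: the ratio stays of order unity.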

What I especially like about the compactness is that it is unaffected by cosmological redshifting. The expansion of the Universe will stretch the gravitational wave, such that the frequency gets lower. This impacts our estimates for the true orbital frequency and the masses, but these cancel out in the compactness. There’s no arguing that we have a highly relativistic system.
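To see the cancellation explicitly, here is a short worked version. It assumes the Newtonian separation formula and writes the detector-frame (redshifted) quantities with a subscript "det"; that subscript is my notation, not the paper's.

```latex
\begin{align}
M_\mathrm{det} &= (1+z)\,M, \qquad f_\mathrm{det} = \frac{f_\mathrm{orb}}{1+z}, \\
R_\mathrm{det} &= \left[\frac{G M_\mathrm{det}}{(2\pi f_\mathrm{det})^2}\right]^{1/3}
  = \left[\frac{(1+z)^3\, G M}{(2\pi f_\mathrm{orb})^2}\right]^{1/3}
  = (1+z)\,R, \\
R_\mathrm{S,det} &= \frac{2 G M_\mathrm{det}}{c^2} = (1+z)\,R_\mathrm{S}, \\
\frac{R_\mathrm{det}}{R_\mathrm{S,det}} &= \frac{R}{R_\mathrm{S}}.
\end{align}
```

Both lengths pick up the same factor of $(1+z)$, so their ratio is the same whether you use the measured or the source-frame values.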

You might now be wondering what if we don’t assume the binary is equal mass (you’ll find it becomes even more compact), or if we factor in black hole spin, or orbital eccentricity, or that the binary will lose mass as the gravitational waves carry away energy? The paper looks at these and shows that there is some wiggle room, but the signal really constrains you to have black holes. This conclusion is almost as inescapable as a black hole itself.

There are a few things which annoy me about this paper—I think it could have been more polished; “Virgo” is improperly capitalised on the author line, and some of the figures are needlessly shabby. However, I think it is a fantastic idea to put together an introductory paper like this which can be used to show students how you can deduce some properties of GW150914’s source with some simple data analysis. I’m happy to be part of a Collaboration that values communicating our science to all levels of expertise, not just writing papers for specialists!

During my undergraduate degree, there was only a single lecture on gravitational waves [bonus note]. I expect the topic will become more popular now. If you’re putting together such a course and are looking for some simple exercises, this paper might come in handy! Or if you’re a student looking for some project work this might be a good starting reference—bonus points if you put together some better looking graphs for your write-up.

If this paper has whetted your appetite for understanding how different properties of the source system leave an imprint in the gravitational wave signal, I’d recommend looking at the Parameter Estimation Paper for more.

The Precession Paper

Synopsis: Precession Paper

Read this if: You want our most detailed analysis of the spins of GW150914’s black holes

Favourite part: We might have previously over-estimated our systematic error

The Basic Physics Paper explained how you could work out some properties of GW150914’s source with simple calculations. These calculations are rather rough, and lead to estimates with large uncertainties. To do things properly, you need templates for the gravitational wave signal. This is what we did in the Parameter Estimation Paper.

In our original analysis, we used two different waveforms:

- The first we referred to as EOBNR, short for the lengthy technical name SEOBNRv2_ROM_DoubleSpin. In short: This includes the spins of the two black holes, but assumes they are aligned such that there’s no precession. In detail: The waveform is calculated by using effective-one-body dynamics (EOB), an approximation for the binary’s motion calculated by transforming the relevant equations into those for a single object. The S at the start stands for spin: the waveform includes the effects of both black holes having spins which are aligned (or antialigned) with the orbital angular momentum. Since the spins are aligned, there’s no precession. The EOB waveforms are tweaked (or calibrated, if you prefer) by comparing them to numerical relativity (NR) waveforms, in particular to get the merger and ringdown portions of the waveform right. While it is easier to solve the EOB equations than full NR simulations, they still take a while. To speed things up, we use a reduced-order model (ROM), a surrogate model constructed to match the waveforms, so we can go straight from system parameters to the waveform, skipping calculating the dynamics of the binary.

- The second we refer to as IMRPhenom, short for the technical IMRPhenomPv2. In short: This waveform includes the effects of precession using a simple approximation that captures the most important effects. In detail: The IMR stands for inspiral–merger–ringdown, the three phases of the waveform (which are included in the EOBNR model too). Phenom is short for phenomenological: the waveform model is constructed by tuning some (arbitrary, but cunningly chosen) functions to match waveforms calculated using a mix of EOB, NR and post-Newtonian theory. This is done for black holes with (anti)aligned spins to first produce the IMRPhenomD model. This is then twisted up, to include the dominant effects of precession to make IMRPhenomPv2. This bit is done by combining the two spins together to create a single parameter, which we call $\chi_\mathrm{p}$, which determines the amount of precession. Since we are combining the two spins into one number, we lose a bit of the richness of the full dynamics, but we get the main part.
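The post doesn’t spell out how the two spins are combined into one number. One commonly quoted definition of the effective precession spin (assumed here from the waveform-modelling literature, not taken from this post) picks the larger of the two mass-weighted in-plane spin components. A minimal sketch, with a hypothetical function name and with the mass ratio defined as the lighter mass over the heavier:

```python
import math


def chi_p(q, chi1, tilt1, chi2, tilt2):
    """Effective precession spin under the assumed definition.

    q     : mass ratio m2/m1, with 0 < q <= 1
    chi1  : dimensionless spin magnitude of the heavier black hole
    tilt1 : angle (radians, 0 to pi) between that spin and the orbital angular momentum
    chi2, tilt2 : the same for the lighter black hole
    """
    if not 0.0 < q <= 1.0:
        raise ValueError("q must satisfy 0 < q <= 1")
    in_plane_1 = chi1 * math.sin(tilt1)
    in_plane_2 = chi2 * math.sin(tilt2)
    # The lighter black hole's in-plane spin is down-weighted by a mass-dependent factor.
    weight_2 = q * (4.0 * q + 3.0) / (4.0 + 3.0 * q)
    return max(in_plane_1, weight_2 * in_plane_2)


# Illustrative values only: spins lying fully in the orbital plane.
print(chi_p(1.0, 0.5, math.pi / 2, 0.0, 0.0))
print(chi_p(0.5, 0.0, 0.0, 1.0, math.pi / 2))
```

Two quite different spin configurations can give the same value, which is the loss of richness mentioned above.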

The EOBNR and IMRPhenom models are created by different groups using different methods, so they are useful checks of each other. If there is an error in our waveforms, it would lead to systematic errors in our estimated parameters.

In this paper, we use another waveform model, a precessing EOBNR waveform, technically known as SEOBNRv3. This model includes all the effects of precession, not just the simple model of the IMRPhenom model. However, it is also computationally expensive, meaning that the analysis takes a long time (we don’t have a ROM to speed things up, as we do for the other EOBNR waveform)—each waveform takes over 20 times as long to calculate as the IMRPhenom model [bonus note].

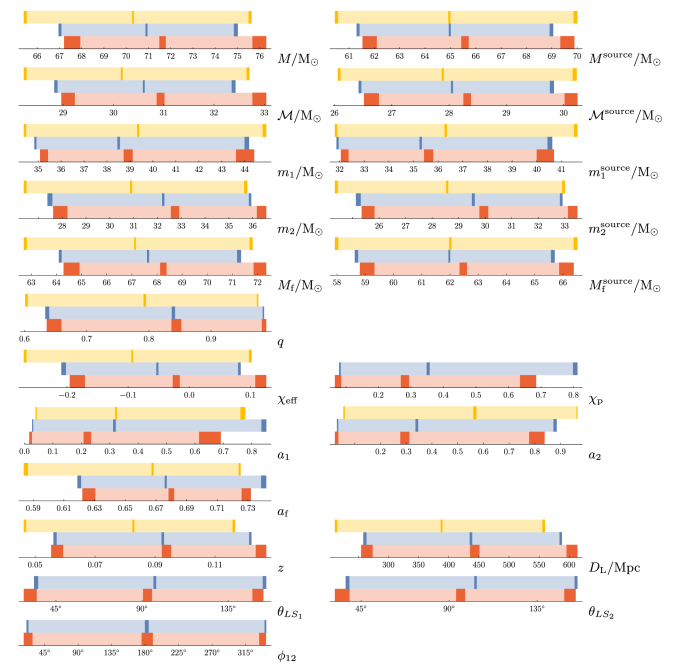

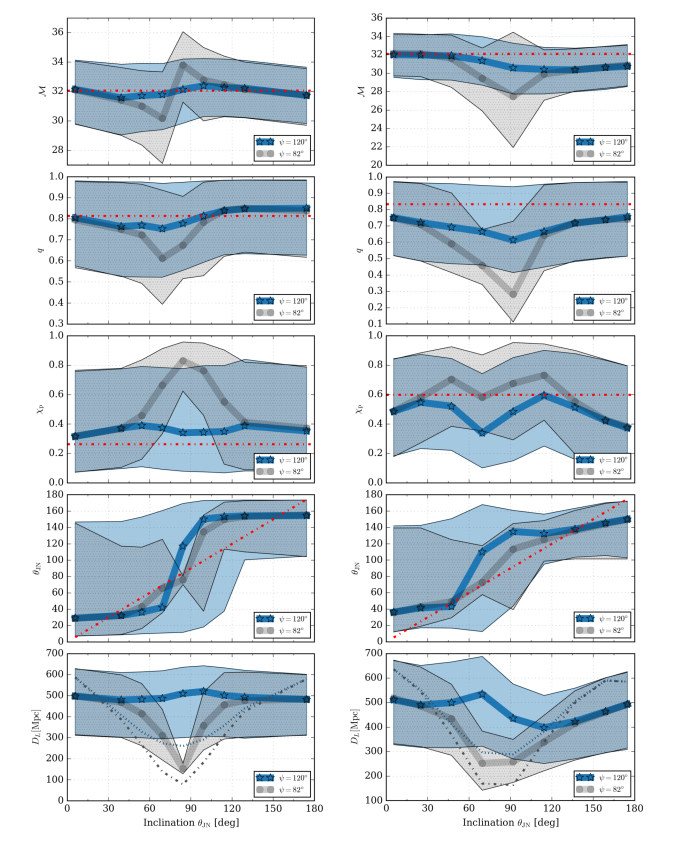

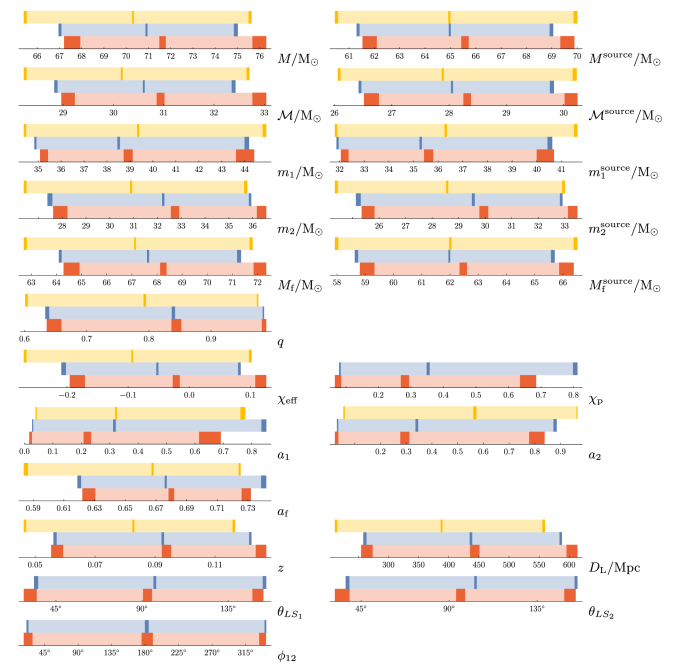

Our results show that all three waveforms give similar results. The precessing EOBNR results are generally more like the IMRPhenom results than the non-precessing EOBNR results are. The plot below compares results from the different waveforms [bonus note].

Comparison of parameter estimates for GW150914 using different waveform models. The bars show the 90% credible intervals, the dark bars show the uncertainty on the 5%, 50% and 95% quantiles from the finite number of posterior samples. The top bar is for the non-precessing EOBNR model, the middle is for the precessing IMRPhenom model, and the bottom is for the fully precessing EOBNR model. Figure 1 of the Precession Paper; see Figure 9 for a comparison of averaged EOBNR and IMRPhenom results, which we have used for our overall results.

We had used the difference between the EOBNR and IMRPhenom results to estimate potential systematic error from waveform modelling. Since the two precessing models are generally in better agreement, we may have been too pessimistic here.

The main difference in results is that our new refined analysis gives tighter constraints on the spins. From the plot above you can see that the uncertainty for the spin magnitudes of the heavier black hole  , the lighter black hole

, the lighter black hole  and the final black hole (resulting from the coalescence)

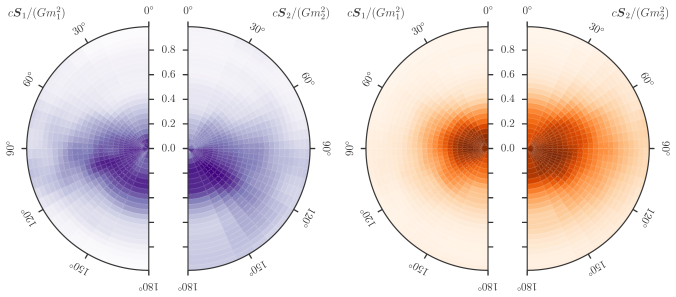

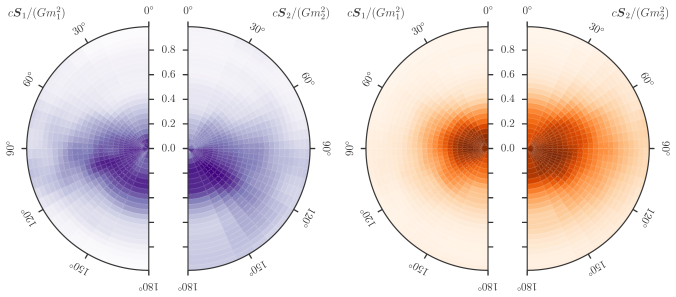

and the final black hole (resulting from the coalescence)  , are slightly narrower. This makes sense, as including the extra imprint from the full effects of precession gives us a bit more information about the spins. The plots below show the constraints on the spins from the two precessing waveforms: the distributions are more condensed with the new results.

, are slightly narrower. This makes sense, as including the extra imprint from the full effects of precession gives us a bit more information about the spins. The plots below show the constraints on the spins from the two precessing waveforms: the distributions are more condensed with the new results.

Comparison of orientations and magnitudes of the two component spins. The spin is perfectly aligned with the orbital angular momentum if the angle is 0. The left disk shows results using the precessing IMRPhenom model, the right using the precessing EOBNR model. In each, the distribution for the more massive black hole is on the left, and for the smaller black hole on the right. Adapted from Figure 5 of the Parameter Estimation Paper and Figure 4 of the Precession Paper.

In conclusion, this analysis has shown that including the full effects of precession does give slightly better estimates of the black hole spins. However, it is safe to trust the IMRPhenom results.

If you are looking for the best parameter estimates for GW150914, these results are better than the original results in the Parameter Estimation Paper. However, the O2 Catalogue Paper includes results using improved calibration and noise power spectral density estimation, as well as using precessing waveforms!

The Systematics Paper

Synopsis: Systematics Paper

Read this if: You want to know how parameter estimation could fare for future detections

Favourite part: There’s no need to panic yet

The Precession Paper highlighted how important it is to have good waveform templates. If there is an error in our templates, either because of modelling or because we are missing some physics, then our estimated parameters could be wrong—we would have a source of systematic error.

We know our waveform models aren’t perfect, so there must be some systematic error; the question is how much? From our analysis so far (such as the good agreement between different waveforms in the Precession Paper), we think that systematic error is less significant than the statistical uncertainty which is a consequence of noise in the detectors. In this paper, we try to quantify systematic error for GW150914-like systems.

To assess systematic errors, we inject waveforms calculated by numerical relativity simulations into data around the time of GW150914, and then analyse them. Numerical relativity exactly solves Einstein’s field equations (which govern general relativity), so results of these simulations give the most accurate predictions for the form of gravitational waves. As we know the true parameters for the injected waveforms, we can compare these to the results of our parameter estimation analysis to check for biases.

We use waveforms computed by two different codes: the Spectral Einstein Code (SpEC) and the Bifunctional Adaptive Mesh (BAM) code. (Don’t the names make them sound like such fun?) Most waveforms are injected into noise-free data, so that we know that any offset in estimated parameters is due to the waveforms and not detector noise; however, we also tried a few injections into real data from around the time of GW150914. The signals are analysed using our standard set-up as used in the Parameter Estimation Paper (a couple of injections are also included in the Precession Paper, where they are analysed with the fully precessing EOBNR waveform to illustrate its accuracy).
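The logic of a noise-free injection test can be shown with a toy example. This is only a cartoon, not the analysis in the paper: the "true" signal is a sinusoid whose frequency drifts slowly (standing in for physics the model lacks), the template is a constant-frequency sinusoid, and all numbers and function names are made up for illustration. With no noise, any offset in the best-fit frequency is purely a modelling bias.

```python
import numpy as np


def injected_signal(t, f0, f_dot):
    """Toy 'true' signal: a sinusoid with a slow linear frequency drift."""
    return np.sin(2.0 * np.pi * (f0 * t + 0.5 * f_dot * t ** 2))


def template(t, f):
    """Toy approximate model: a constant-frequency sinusoid (no drift)."""
    return np.sin(2.0 * np.pi * f * t)


def best_fit_frequency(data, t, f_grid):
    """Grid frequency minimising the summed squared residual (the noise-free likelihood peak)."""
    residuals = [np.sum((data - template(t, f)) ** 2) for f in f_grid]
    return f_grid[int(np.argmin(residuals))]


t = np.linspace(0.0, 1.0, 4096, endpoint=False)   # one second of toy data
f_true, f_dot_true = 50.0, 0.2                    # hypothetical injected parameters
data = injected_signal(t, f_true, f_dot_true)     # noise-free injection

f_grid = np.linspace(49.8, 50.2, 81)              # search grid with 0.005 Hz spacing
f_hat = best_fit_frequency(data, t, f_grid)
bias = f_hat - f_true
print(f"injected f = {f_true} Hz, recovered f = {f_hat:.3f} Hz, bias = {bias:+.3f} Hz")
```

The recovered frequency is pulled upwards because the template has to absorb the drift it cannot describe. The real question is then whether such an offset is large or small compared with the statistical uncertainty.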

The results show that in most cases, systematic errors from our waveform models are small. However, systematic errors can be significant for some orientations of precessing binaries. If we are looking at the orbital plane edge on, then there can be errors in the distance, the mass ratio and the spins, as illustrated below [bonus note]. Thankfully, edge-on binaries are quieter than face-on binaries, and so should make up only a small fraction of detected sources (GW150914 is most probably face off). Furthermore, biases are only significant for some polarization angles (an angle which describes the orientation of the detectors relative to the stretch/squash of the gravitational wave polarizations). Factoring this in, a rough estimate is that about 0.3% of detected signals would fall into the unlucky region where waveform biases are important.

While it seems that we don’t have to worry about waveform error for GW150914, this doesn’t mean we can relax. Other systems may show up different aspects of waveform models. For example, our approximants only include the dominant modes (spherical harmonic decompositions of the gravitational waves). Higher-order modes have more of an impact in systems where the two black holes are unequal masses, or where the binary has a higher total mass, so that the merger and ringdown parts of the waveform are more important. We need to continue work on developing improved waveform models (or at least, including our uncertainty about them in our analysis), and remember to check for biases in our results!

The Numerical Relativity Comparison Paper

Synopsis: Numerical Relativity Comparison Paper

Read this if: You are really suspicious of our waveform models, or really like long tables of numerical data

Favourite part: We might one day have enough numerical relativity waveforms to do full parameter estimation with them

In the Precession Paper we discussed how important it was to have accurate waveforms; in the Systematics Paper we analysed numerical relativity waveforms to check the accuracy of our results. Since we do have numerical relativity waveforms, you might be wondering why we don’t just use these in our analysis? In this paper, we give it a go.

Our standard parameter-estimation code (LALInference) randomly hops around parameter space; for each set of parameters we generate a new waveform and see how well it matches the data. This is an efficient way of exploring the parameter space. Numerical relativity waveforms are too computationally expensive to generate one each time we hop. We need a different approach.

The alternative is to use existing waveforms, and see how well each of them matches. Each simulation gives the gravitational waves for a particular mass ratio and combination of spins; we can scale the waves to examine different total masses, and it is easy to consider what the waves would look like if measured at a different position (distance, inclination or sky location). Therefore, we can actually cover a fair range of possible parameters with a given set of simulations.
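The mass scaling works because vacuum general relativity has no built-in scale: simulations are done in units where times are measured in multiples of $GM/c^3$ and the strain (times distance) in multiples of $GM/c^2$. Here is a minimal sketch of the rescaling, assuming the simulation output is a dimensionless time $t/M$ and amplitude $rh/M$; the function name and the toy waveform are hypothetical, and orientation effects are ignored.

```python
import numpy as np

G = 6.67430e-11                 # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0               # speed of light, m/s
M_SUN = 1.98847e30              # solar mass, kg
MPC = 3.0856775814913673e22     # megaparsec, m


def scale_nr_waveform(t_over_m, rh_over_m, total_mass_msun, distance_mpc):
    """Convert dimensionless simulation output to seconds and strain.

    t_over_m  : times in units of the total mass (geometric units)
    rh_over_m : strain times extraction radius, in units of the total mass
    """
    mass_kg = total_mass_msun * M_SUN
    time_unit = G * mass_kg / C ** 3      # seconds per unit of M
    length_unit = G * mass_kg / C ** 2    # metres per unit of M
    times = np.asarray(t_over_m, dtype=float) * time_unit
    strain = np.asarray(rh_over_m, dtype=float) * length_unit / (distance_mpc * MPC)
    return times, strain


# Toy stand-in for a simulation (not real numerical relativity output).
t_geom = np.linspace(-1000.0, 0.0, 5)
rh_geom = 0.1 * np.cos(0.05 * t_geom)

t_60, h_60 = scale_nr_waveform(t_geom, rh_geom, total_mass_msun=60.0, distance_mpc=400.0)
t_70, h_70 = scale_nr_waveform(t_geom, rh_geom, total_mass_msun=70.0, distance_mpc=400.0)
print(f"duration at 60 solar masses: {t_60[-1] - t_60[0]:.3f} s")
print(f"duration at 70 solar masses: {t_70[-1] - t_70[0]:.3f} s")
```

A heavier system gives a longer, louder signal at lower frequency from the very same simulation, which is why one simulation can be compared against the data for a whole range of total masses.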

To keep things quick, the code averages over positions; this means we don’t currently get an estimate of the redshift, and so all the masses are given as measured in the detector frame and not as the intrinsic masses of the source.

The coverage of numerical relativity simulations is still quite sparse, so to get nice credible regions, a simple Gaussian fit is used for the likelihood. I’m not convinced that this captures all the detail of the true likelihood, but it should suffice for a broad estimate of the width of the distributions.
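To give a flavour of what a Gaussian fit to a sparsely sampled likelihood means, here is a one-dimensional sketch: a Gaussian likelihood has a log-likelihood that is a parabola, so fitting a quadratic to the log-likelihood at the available simulation points gives a mean and a width. The paper’s fit is multi-dimensional and more careful; the function name, the grid and the synthetic values below are all assumptions for illustration.

```python
import numpy as np


def gaussian_fit_1d(params, log_likelihoods):
    """Fit ln L = a x^2 + b x + c and return the implied Gaussian mean and width."""
    a, b, _ = np.polyfit(params, log_likelihoods, 2)
    if a >= 0:
        raise ValueError("log-likelihood is not peaked; no Gaussian fit possible")
    mean = -b / (2.0 * a)
    sigma = np.sqrt(-1.0 / (2.0 * a))
    return mean, sigma


# Hypothetical sparse grid of simulated mass ratios with synthetic log-likelihoods.
q_grid = np.array([0.5, 0.6, 0.7, 0.8, 0.9, 1.0])
ln_l = -0.5 * ((q_grid - 0.82) / 0.12) ** 2 + 3.0

mean, sigma = gaussian_fit_1d(q_grid, ln_l)
print(f"fitted peak at q = {mean:.2f} with width {sigma:.2f}")
```

The fit interpolates between simulations, but it also forces the likelihood to be symmetric and single-peaked, which is exactly the detail that might be lost.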

The results of this analysis generally agree with those from our standard analysis. This is a relief, but not surprising given all the other checks that we have done! It hints that we might be able to get slightly better measurements of the spins and mass ratios if we used more accurate waveforms in our standard analysis, but the overall conclusions are sound.

I’ve been asked if since these results use numerical relativity waveforms, they are the best to use? My answer is no. As well as potential error from the sparse sampling of simulations, there are several small things to be wary of.

- We only have short numerical relativity waveforms. This means that the analysis only goes down to a frequency of $30~\mathrm{Hz}$ and ignores earlier cycles. The standard analysis includes data down to $20~\mathrm{Hz}$, and this extra data does give you a little information about precession. (The limit of the simulation length also means you shouldn’t expect this type of analysis for the longer LVT151012 or GW151226 any time soon).

- This analysis doesn’t include the effects of calibration uncertainty. There is some uncertainty in how to convert from the measured signal at the detectors’ output to the physical strain of the gravitational wave. Our standard analysis folds this in, but that isn’t done here. The estimates of the spin can be affected by miscalibration. (This paper also uses the earlier calibration, rather than the improved calibration of the O1 Binary Black Hole Paper).

- Despite numerical relativity simulations producing waveforms which include all higher modes, not all of them are actually used in the analysis. More are included than in the standard analysis, so this will probably make negligible difference.

Finally, I wanted to mention one more detail, as I think it is not widely appreciated. The gravitational wave likelihood is given by an inner product

![\displaystyle L \propto \exp \left[- \int_{-\infty}^{\infty} \mathrm{d}f \frac{|s(f) - h(f)|^2}{S_n(f)} \right]](https://s0.wp.com/latex.php?latex=%5Cdisplaystyle+L+%5Cpropto+%5Cexp+%5Cleft%5B-+%5Cint_%7B-%5Cinfty%7D%5E%7B%5Cinfty%7D+%C2%A0%5Cmathrm%7Bd%7Df+%C2%A0%5Cfrac%7B%7Cs%28f%29+-+h%28f%29%7C%5E2%7D%7BS_n%28f%29%7D+%C2%A0%5Cright%5D&bg=ffffff&fg=444444&s=0&c=20201002) ,

where $s(f)$ is the signal, $h(f)$ is our waveform template and $S_n(f)$ is the noise power spectral density (PSD). These are the three things we need to know to get the right answer. This paper, together with the Precession Paper and the Systematics Paper, has been looking at error from our waveform models $h(f)$. Uncertainty from the calibration of $s(f)$ is included in the standard analysis, so we know how to factor this in (and people are currently working on more sophisticated models for calibration error). This leaves the noise PSD $S_n(f)$…

The noise PSD varies all the time, so it needs to be estimated from the data. If you use a different stretch of data, you’ll get a different estimate, and this will impact your results. Ideally, you would want to estimate from the time span that includes the signal itself, but that’s tricky as there’s a signal in the way. The analysis in this paper calculates the noise power spectral density using a different time span and a different method than our standard analysis; therefore, we expect some small difference in the estimated parameters. This might be comparable to (or even bigger than) the difference from switching waveforms! We see from the similarity of results that this cannot be a big effect, but it means that you shouldn’t obsess over small differences, thinking that they could be due to waveform differences, when they could just come from estimation of the noise PSD.
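You can see the effect of the choice of data stretch with a few lines of Python. This sketch estimates the PSD by averaging periodograms of non-overlapping segments, a simplified stand-in for what real analyses do (no windowing or median averaging), and applies it to two stretches of simulated white noise. The function name, sample rate and segment length are arbitrary choices for illustration.

```python
import numpy as np


def average_psd(x, fs, seg_len):
    """One-sided PSD from the mean periodogram of non-overlapping, unwindowed segments."""
    n_seg = len(x) // seg_len
    segments = np.reshape(x[: n_seg * seg_len], (n_seg, seg_len))
    spectra = np.abs(np.fft.rfft(segments, axis=1)) ** 2
    psd = 2.0 * spectra.mean(axis=0) / (fs * seg_len)
    psd[0] /= 2.0              # the zero-frequency bin is not doubled
    if seg_len % 2 == 0:
        psd[-1] /= 2.0         # nor is the Nyquist bin
    freqs = np.fft.rfftfreq(seg_len, d=1.0 / fs)
    return freqs, psd


fs = 1024.0                                # toy sample rate in Hz
rng = np.random.default_rng(0)
noise = rng.normal(size=64 * 1024)         # unit-variance white noise, true PSD = 2 / fs

half = noise.size // 2
freqs, psd_a = average_psd(noise[:half], fs, seg_len=1024)   # first stretch
_, psd_b = average_psd(noise[half:], fs, seg_len=1024)       # second stretch

frac_diff = np.abs(psd_a - psd_b) / (0.5 * (psd_a + psd_b))
print(f"median fractional difference between stretches: {np.median(frac_diff):.2f}")
```

Even though both stretches are drawn from exactly the same noise process, the two estimates disagree bin by bin, and that disagreement feeds straight into the likelihood.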

Lots of work is currently going into making sure that the numerator term $|s(f) - h(f)|^2$ is accurate. I think that the denominator $S_n(f)$ needs attention too. Since we have been kept rather busy, including uncertainty in PSD estimation will have to wait for a future set of papers.
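To make the roles of the numerator and denominator concrete, here is a discretised version of the likelihood above. It is a sketch under simplifying assumptions: the sum runs only over the frequency bins you pass in, constant factors are dropped (as in the proportionality), and the toy data, template and flat PSD are invented for illustration.

```python
import numpy as np


def log_likelihood(data_f, template_f, psd, df):
    """ln L up to an additive constant: -sum(|s - h|^2 / S_n) * df over the supplied bins."""
    residual = np.asarray(data_f) - np.asarray(template_f)
    return -float(np.sum(np.abs(residual) ** 2 / np.asarray(psd)) * df)


# Toy frequency-domain quantities (not real detector data).
df = 0.25
freqs = np.arange(20.0, 1024.0, df)
psd = np.full(freqs.size, 1e-46)                       # flat toy noise PSD
template_f = 1e-23 * (freqs / 100.0) ** (-7.0 / 6.0) * np.exp(2j * np.pi * freqs * 0.01)
data_f = 1.05 * template_f                             # noise-free 'data', 5% louder than the template

ln_l_ref = log_likelihood(data_f, template_f, psd, df)
ln_l_alt = log_likelihood(data_f, template_f, 1.2 * psd, df)   # same data, PSD estimate 20% higher
print(f"ln L with reference PSD: {ln_l_ref:.3f}")
print(f"ln L with inflated PSD:  {ln_l_alt:.3f}")
```

Nothing about the signal or the template changed between the two calls, yet the likelihood did: a mis-estimated PSD reweights every residual.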

Bonus notes

Finches

100 bonus points to anyone who folds up the papers to make beaks suitable for eating different foods.

The right answer

Our current best estimate for the chirp mass (from the O2 Catalogue Paper) would be about $31 M_\odot$. You need proper templates for the gravitational wave signal to calculate this. If you factor in that the gravitational wave gets redshifted (shifted to lower frequency by the expansion of the Universe), then the true chirp mass of the source system is about $28.6 M_\odot$.

Formative experiences

My one undergraduate lecture on gravitational waves was the penultimate lecture of the fourth-year general relativity course. I missed this lecture, as I had a PhD interview (at the University of Birmingham). Perhaps if I had sat through it, my research career would have been different?

Good things come…

The computational expense of a waveform is important, as when we are doing parameter estimation, we calculate lots (tens of millions) of waveforms for different parameters to see how they match the data. Before O1, the task of using SEOBNRv3 for parameter estimation seemed quixotic. The first detection, however, was enticing enough to give it a try. It was a truly heroic effort by Vivien Raymond and team that produced these results—I am slightly suspicious that Vivien might actually be a wizard.

GW150914 is a short signal, meaning it is relatively quick to analyse. Still, it required us using all the tricks at our disposal to get results in a reasonable time. When it came time to submit final results for the Discovery Paper, we had just about 1,000 samples from the posterior probability distribution for the precessing EOBNR waveform. For comparison, we had over 45,000 samples for the non-precessing EOBNR waveform. 1,000 samples isn’t enough to accurately map out the probability distributions, so we decided to wait and collect more samples. The preliminary results showed that things looked similar, so there wouldn’t be a big difference in the science we could do. For the Precession Paper, we finally collected 2,700 samples. This is still a relatively small number, so we carefully checked the uncertainty in our results due to the finite number of samples.
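One simple way to get a feel for how the number of samples limits the precision of a quantile is a bootstrap: resample the posterior samples with replacement many times and look at the scatter of the quantile. This is a sketch of the idea rather than the method used in the paper; it assumes the samples are independent, and the synthetic "posterior" and function name are invented for illustration.

```python
import numpy as np


def quantile_uncertainty(samples, q, n_boot=500, seed=0):
    """Bootstrap standard error of the q-th quantile of a set of independent samples."""
    rng = np.random.default_rng(seed)
    samples = np.asarray(samples)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        resampled = rng.choice(samples, size=samples.size, replace=True)
        estimates[i] = np.quantile(resampled, q)
    return estimates.std(ddof=1)


# Synthetic stand-in for posterior samples of some parameter.
rng = np.random.default_rng(1)
posterior_like = rng.normal(loc=30.0, scale=2.0, size=45_000)

for n in (1_000, 2_700):
    err = quantile_uncertainty(posterior_like[:n], 0.5)
    print(f"{n} samples: uncertainty on the median ~ {err:.3f}")
```

The uncertainty shrinks roughly as one over the square root of the number of samples, so going from 1,000 to 2,700 helps, but not dramatically.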

The Precession Paper has shown that it is possible to use the precessing EOBNR for parameter estimation, but don’t expect it to become the norm, at least until we have a faster implementation of it. Vivien is only human, and I’m sure his family would like to see him occasionally.

Parameter key

In case you are wondering what all the symbols in the results plots stand for, here are their usual definitions. First up, the various masses

$m_1$: the mass of the heavier black hole, sometimes called the primary black hole;

$m_2$: the mass of the lighter black hole, sometimes called the secondary black hole;

$M$: the total mass of the binary, $M = m_1 + m_2$;

$M_\mathrm{f}$: the mass of the final black hole (after merger);

$\mathcal{M}$: the chirp mass, the combination of the two component masses which sets how the binary inspirals together;

$q$: the mass ratio, $q = m_2/m_1 \leq 1$. Confusingly, numerical relativists often use the opposite convention $q = m_1/m_2 \geq 1$ (which is why the Numerical Relativity Comparison Paper discusses results in terms of $1/q$: we can keep the standard definition, but all the numbers are numerical relativist friendly).
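As a quick illustration of how the mass parameters relate to each other, here is a small sketch. The chirp mass formula, $(m_1 m_2)^{3/5}/(m_1 + m_2)^{1/5}$, is the standard definition rather than something spelled out in this post, and the function name and example masses (round numbers of the size quoted for GW150914, in solar masses) are just for illustration.

```python
def mass_parameters(m1, m2):
    """Return (total mass, chirp mass, mass ratio) for two component masses."""
    # Enforce the convention that m1 is the heavier (primary) black hole
    if m2 > m1:
        m1, m2 = m2, m1
    total_mass = m1 + m2
    # Standard chirp mass definition (assumed; not given explicitly in the text)
    chirp_mass = (m1 * m2) ** 0.6 / total_mass ** 0.2
    # Mass ratio in the q <= 1 convention
    mass_ratio = m2 / m1
    return total_mass, chirp_mass, mass_ratio


total_mass, chirp_mass, mass_ratio = mass_parameters(36.0, 29.0)
print(f"M = {total_mass:.1f}, chirp mass = {chirp_mass:.1f}, q = {mass_ratio:.2f}")
print(f"Numerical relativist friendly 1/q = {1.0 / mass_ratio:.2f}")
```

Swapping the order of the inputs gives the same answer, since the function sorts the masses before applying the $q \leq 1$ convention.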

A superscript “source” is sometimes used to distinguish the actual physical masses of the source from those measured by the detector which have been affected by cosmological redshift. The measured detector-frame mass is $m = (1 + z) m^\mathrm{source}$, where $m^\mathrm{source}$ is the true, redshift-corrected source-frame mass and $z$ is the redshift. The mass ratio $q$ is independent of the redshift. On the topic of redshift, we have

$z$: the cosmological redshift ($z = 0$ would be now);

$D_\mathrm{L}$: the luminosity distance.
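The conversion between detector-frame and source-frame masses is simple enough to sketch in a couple of lines. This assumes the usual relation that the detector-frame mass is $(1 + z)$ times the source-frame mass; the function names are hypothetical, and the redshift and masses are illustrative round numbers.

```python
def detector_frame_mass(source_mass, redshift):
    """Redshift a source-frame mass to what the detector measures."""
    return (1.0 + redshift) * source_mass


def source_frame_mass(detector_mass, redshift):
    """Undo the cosmological redshift to recover the source-frame mass."""
    return detector_mass / (1.0 + redshift)


z = 0.09  # illustrative redshift
m1_det = detector_frame_mass(36.0, z)
m2_det = detector_frame_mass(29.0, z)
print(f"Detector-frame masses: {m1_det:.2f} and {m2_det:.2f}")
# Both masses pick up the same factor, so the mass ratio is unchanged
print(f"Mass ratio: {m2_det / m1_det:.3f}")
```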

The luminosity distance sets the amplitude of the signal, as does the orientation, which we often describe using

$\iota$: the inclination, the angle between the line of sight and the orbital angular momentum ($\boldsymbol{L}$). This is zero for a face-on binary.

$\theta_{JN}$: the angle between the line of sight ($\boldsymbol{N}$) and the total angular momentum of the binary ($\boldsymbol{J}$); this is approximately equal to the inclination, but is easier to use for precessing binaries.

As well as masses, black holes have spins

$a_1$: the (dimensionless) spin magnitude of the heavier black hole, which is between $0$ (no spin) and $1$ (maximum spin);

$a_2$: the (dimensionless) spin magnitude of the lighter black hole;

$a_\mathrm{f}$: the (dimensionless) spin magnitude of the final black hole;

$\chi_\mathrm{eff}$: the effective inspiral spin parameter, a combination of the two component spins which has the largest impact on the rate of inspiral (think of it as the spin equivalent of the chirp mass);

$\chi_\mathrm{p}$: the effective precession spin parameter, a combination of spins which indicates the dominant effects of precession; it’s $0$ for no precession and $1$ for maximal precession;

$\theta_{LS_1}$: the primary tilt angle, the angle between the orbital angular momentum and the heavier black hole’s spin ($\boldsymbol{S}_1$). This is zero for aligned spin.

$\theta_{LS_2}$: the secondary tilt angle, the angle between the orbital angular momentum and the lighter black hole’s spin ($\boldsymbol{S}_2$).

$\phi_{12}$: the angle between the projections of the two spins on the orbital plane.
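If you would like to see how the two effective spin parameters are built from the component spins, here is a sketch. The formulae are the commonly used definitions (a mass-weighted sum of the aligned spin components for the effective inspiral spin, and the larger of the suitably weighted in-plane components for the effective precession spin); they are not written out in this post, so treat them as my assumption. The function name and inputs are illustrative.

```python
import math


def effective_spins(m1, m2, a1, a2, tilt1, tilt2):
    """Return (chi_eff, chi_p) assuming m1 >= m2 and tilt angles in radians."""
    q = m2 / m1  # mass ratio, q <= 1
    # Effective inspiral spin: mass-weighted spin components along L
    chi_eff = (m1 * a1 * math.cos(tilt1) + m2 * a2 * math.cos(tilt2)) / (m1 + m2)
    # In-plane spin components, which drive precession
    a1_perp = a1 * math.sin(tilt1)
    a2_perp = a2 * math.sin(tilt2)
    # Effective precession spin: the dominant weighted in-plane component
    chi_p = max(a1_perp, q * (4.0 * q + 3.0) / (4.0 + 3.0 * q) * a2_perp)
    return chi_eff, chi_p


# Aligned spins: no precession
print(effective_spins(36.0, 29.0, 0.5, 0.5, 0.0, 0.0))
# Maximally spinning primary lying in the orbital plane: maximal precession
print(effective_spins(36.0, 29.0, 1.0, 0.0, math.pi / 2, 0.0))
```

The two example calls bracket the range described in the list: aligned spins give no precession, while a maximal in-plane primary spin gives the largest possible effective precession spin.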

The orientation angles change in precessing binaries (when the spins are not perfectly aligned or antialigned with the orbital angular momentum), so we quote values at a reference time corresponding to when the gravitational wave frequency is $20~\mathrm{Hz}$. Finally (for the plots shown here)

$\psi$: the polarization angle; this is zero when the detector arms are parallel to the $+$ polarization’s stretch/squash axis.

For more detailed definitions, check out the Parameter Estimation Paper or the LALInference Paper.

Figure 2 of Barrett et al. (2018): the rate in 30 different chirp mass bins, as calculated by COMPAS for our default population parameters. The caption gives the total rate for all masses.

The Fisher information matrix is defined as

$$F_{ij} = -\left\langle \frac{\partial^2 \ln \mathcal{L}(\mathcal{D}|\{\lambda\})}{\partial \lambda_i \, \partial \lambda_j} \right\rangle,$$

where $\mathcal{L}(\mathcal{D}|\{\lambda\})$ is the likelihood for data $\mathcal{D}$ (the number of observations and their chirp mass distribution in our case), $\{\lambda\}$ are our parameters (natal kick, etc.), and the angular brackets indicate the average over the population parameters. In statistics terminology, this is the variance of the score, which I think sounds cool. The Fisher information matrix nicely quantifies how much information we can learn about the parameters, including the correlations between them (so we can explore degeneracies). The inverse of the Fisher information matrix gives a lower bound on the covariance matrix (the multidimensional generalisation of the variance in a normal distribution) for the parameters $\{\lambda\}$. In the limit of a large number of detections, we can use the Fisher information matrix to estimate the accuracy to which we measure the parameters [bonus note].
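To make the link between the Fisher information matrix and measurement uncertainties concrete, here is a toy sketch. It inverts a made-up two-parameter Fisher matrix to get the covariance bound, then reads off the standard deviations and the correlation between the parameters. The numbers are invented for illustration and have nothing to do with the COMPAS results; the function name is hypothetical.

```python
import numpy as np


def uncertainty_from_fisher(fisher):
    """Return (standard deviations, correlation matrix) from a Fisher matrix."""
    # The inverse Fisher matrix bounds the covariance matrix from below
    covariance = np.linalg.inv(fisher)
    sigma = np.sqrt(np.diag(covariance))
    correlation = covariance / np.outer(sigma, sigma)
    return sigma, correlation


# Made-up Fisher matrix for two parameters (illustrative values only)
fisher = np.array([[400.0, 100.0],
                   [100.0, 200.0]])
sigma, correlation = uncertainty_from_fisher(fisher)
print("Standard deviations:", sigma)
print("Correlation coefficient:", correlation[0, 1])
```

A positive off-diagonal element in the Fisher matrix shows up as a negative correlation in the covariance, which is how a degeneracy between two parameters would reveal itself.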

Figure 6 of Barrett et al. (2018): results for the population parameters, including the common envelope efficiency $\alpha_\mathrm{CE}$, the Wolf–Rayet mass loss rate $f_\mathrm{WR}$, and the luminous blue variable mass loss rate $f_\mathrm{LBV}$. There is an anticorrelation between one pair of parameters, and hints of a similar anticorrelation between another pair. We show 1500 different realisations of the binary population to give an idea of scatter.

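We model the supernova kick $v_\mathrm{kick}^*$ as following a Maxwell–Boltzmann distribution,

$$p(v_\mathrm{kick}^*) = \sqrt{\frac{2}{\pi}} \frac{(v_\mathrm{kick}^*)^2}{\sigma_\mathrm{kick}^3} \exp\left[-\frac{(v_\mathrm{kick}^*)^2}{2\sigma_\mathrm{kick}^2}\right],$$

where $\sigma_\mathrm{kick}$ is the unknown population parameter. The natal kick received by the black hole $v_\mathrm{kick}$ is not the same as this, however, as we assume some of the material ejected by the supernova falls back, reducing the overall kick. The final natal kick is

$$v_\mathrm{kick} = (1 - f_\mathrm{fb}) v_\mathrm{kick}^*,$$

where $f_\mathrm{fb}$ is the fraction that falls back, taken from Fryer et al. (2012). The fraction is greater for larger black holes, so the biggest black holes get no kicks. This means that the largest black holes are unaffected by the value of $\sigma_\mathrm{kick}$.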
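Here is a rough sketch of how you could draw natal kicks following this recipe. It assumes the fallback simply scales the kick by one minus the fallback fraction, and it draws Maxwell–Boltzmann speeds as the magnitude of three independent Gaussian velocity components. The function name, the dispersion of 250 km/s and the fallback fractions are illustrative choices of mine; the real fallback fractions come from the Fryer et al. (2012) prescription, which is not reproduced here.

```python
import numpy as np


def draw_natal_kicks(sigma_kick, fallback_fraction, size, seed=0):
    """Draw natal kick speeds, reduced by a (hypothetical) fallback fraction."""
    rng = np.random.default_rng(seed)
    # A Maxwell-Boltzmann speed is the norm of three Gaussian components
    components = rng.normal(scale=sigma_kick, size=(size, 3))
    supernova_kick = np.linalg.norm(components, axis=1)
    # Fallback reduces the kick actually received by the black hole
    return (1.0 - fallback_fraction) * supernova_kick


no_fallback = draw_natal_kicks(250.0, 0.0, 100000)
half_fallback = draw_natal_kicks(250.0, 0.5, 100000)
full_fallback = draw_natal_kicks(250.0, 1.0, 100000)
print(f"Mean kick, no fallback: {no_fallback.mean():.0f} km/s")
print(f"Mean kick, half fallback: {half_fallback.mean():.0f} km/s")
print(f"Mean kick, complete fallback: {full_fallback.mean():.0f} km/s")
```

With complete fallback the kick vanishes whatever the dispersion, which is why the largest black holes carry no information about it.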

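Our model predicts the rate $\mu$ and the probability of falling in each chirp mass bin $p_k$ (we factor measurement uncertainty into this). Our observations are the total number of detections $N_\mathrm{obs}$ and the number in each chirp mass bin $c_k$ ($N_\mathrm{obs} = \sum_k c_k$). The likelihood is the probability of these observations given the model predictions. We can split the likelihood into two pieces, one for the rate, and one for the chirp mass distribution,

$$\mathcal{L}(\mathcal{D}|\{\lambda\}) = \mathcal{L}(N_\mathrm{obs}|\mu) \, \mathcal{L}(\{c_k\}|\{p_k\}).$$

For the rate likelihood, we need the probability of observing $N_\mathrm{obs}$ detections given the predicted rate $\mu$. This is given by a Poisson distribution,

$$\mathcal{L}(N_\mathrm{obs}|\mu) = \frac{(\mu t_\mathrm{obs})^{N_\mathrm{obs}}}{N_\mathrm{obs}!} \exp(-\mu t_\mathrm{obs}),$$

where $t_\mathrm{obs}$ is the total observing time. For the chirp mass likelihood, we need the probability of getting a number of detections in each bin, given the predicted fractions. This is given by a multinomial distribution,

$$\mathcal{L}(\{c_k\}|\{p_k\}) = \frac{N_\mathrm{obs}!}{\prod_k c_k!} \prod_k p_k^{c_k}.$$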
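The two-piece likelihood is straightforward to code up. The sketch below is my own illustration, not the code used for the paper: it adds the log of a Poisson probability for the total number of detections to the log of a multinomial probability for the counts in each bin. The function and argument names are hypothetical.

```python
import math


def log_likelihood(counts, rate, probabilities, t_obs):
    """Log-likelihood: Poisson for the total count, multinomial for the bins."""
    n_obs = sum(counts)
    expected = rate * t_obs
    # Poisson piece: probability of n_obs detections given the expected number
    log_poisson = n_obs * math.log(expected) - expected - math.lgamma(n_obs + 1)
    # Multinomial piece: probability of the per-bin counts given the fractions
    log_multinomial = math.lgamma(n_obs + 1)
    for count, probability in zip(counts, probabilities):
        if count == 0:
            continue  # empty bins contribute a factor of one
        if probability == 0.0:
            return -math.inf  # a detection in an impossible bin
        log_multinomial += count * math.log(probability) - math.lgamma(count + 1)
    return log_poisson + log_multinomial


counts = [3, 5, 2]
fractions = [0.3, 0.5, 0.2]
print(log_likelihood(counts, rate=10.0, probabilities=fractions, t_obs=1.0))
print(log_likelihood(counts, rate=20.0, probabilities=fractions, t_obs=1.0))
```

Working with logarithms (via `math.lgamma` for the factorials) avoids overflow when the number of detections gets large.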

Therefore, we can see that the measurement uncertainty, defined by the inverse of the Fisher information matrix, scales on average as $N_\mathrm{obs}^{-1/2}$, where $N_\mathrm{obs}$ is the number of detections.
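You can see this kind of scaling in a toy experiment. The sketch below only looks at the rate piece of the problem: it simulates many Poisson realisations of the number of detections, estimates the rate from each, and measures the fractional scatter. The function name and numbers are made up for illustration; quadrupling the observing time (and hence the expected number of detections) should roughly halve the scatter.

```python
import numpy as np


def fractional_rate_scatter(rate, t_obs, n_trials=20000, seed=0):
    """Fractional scatter in the rate estimated from Poisson counts."""
    rng = np.random.default_rng(seed)
    # Simulate the number of detections in many repeated observing runs
    n_obs = rng.poisson(rate * t_obs, size=n_trials)
    rate_estimates = n_obs / t_obs
    return rate_estimates.std() / rate


scatter_100 = fractional_rate_scatter(rate=100.0, t_obs=1.0)  # ~100 detections
scatter_400 = fractional_rate_scatter(rate=100.0, t_obs=4.0)  # ~400 detections
print(f"Fractional scatter with ~100 detections: {scatter_100:.3f}")
print(f"Fractional scatter with ~400 detections: {scatter_400:.3f}")
print(f"Ratio: {scatter_100 / scatter_400:.2f}")
```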

The maximum likelihood values of $N_\mathrm{obs}$ (the total number of detections) and $c_k$ (the number in each chirp mass bin) are the same as their expectation values. The second-order derivatives are given by the expression we have worked out for the Fisher information matrix. Therefore, in the region around the maximum likelihood point, the Fisher information matrix encodes all the relevant information about the shape of the likelihood.

As we get more detections, statistical uncertainties should reduce, with the peak of the distribution homing in on the maximum likelihood value, and its width narrowing. If you take the limit of the number of detections $N_\mathrm{obs} \rightarrow \infty$, you’ll see that the distribution basically becomes a delta function at the maximum likelihood values. To check that our $N_\mathrm{obs}$ was large enough, we verified that higher-order derivatives were still small.