GW190814 is an exceptional discovery from the third observing run (O3) of the LIGO and Virgo gravitational wave detectors. The signal came from the coalescence of a binary made up of a component about 23 times the mass of our Sun (solar masses) and one about 2.6 solar masses. The more massive component would be a black hole, similar to past discoveries. The less massive component, however, we’re not sure about. This is a mass range where observations have been lacking. It could be a neutron star. In this case, GW190814 would be the first time we have seen a neutron star–black hole binary. This could also be the most massive neutron star ever found, certainly the most massive in a compact-object (black hole or neutron star) binary. Alternatively, it could be a black hole, in which case it would be the smallest black hole ever found. We have discovered something special, we’re just not sure exactly what…

The population of compact objects (black holes and neutron stars) observed with gravitational waves and with electromagnetic astronomy, including a few which are uncertain. GW190814 is highlighted. It is not clear if its lighter component is a black hole or neutron star. Source: Northwestern

Detection

14 August 2019 marked the second birthday of GW170814—the first gravitational wave we clearly detected using all three of our detectors. As a present, we got an even more exciting detection.

I was at the MESA Summer School at the time [bonus advertisement], learning how to model stars. My student Chase came over excitedly as soon as he saw the alert. We snuck a look at the data in a private corner of the class. GW190814 (then simply known as candidate S190814bv) was a beautifully clear chirp. You shouldn’t assess how plausible a candidate signal is by eye (that’s why we spent years building detection algorithms [bonus note]), but GW190814 was a clear slam dunk that hit it out of the park straight into the bullseye. Check mate!

Time–frequency plots for GW190814 as measured by LIGO Hanford, LIGO Livingston and Virgo. The chirp of a binary coalescence is clearest in Livingston. For long signals, like GW190814, it is usually hard to pick out the chirp by eye. Figure 1 of the GW190814 Discovery Paper.

Unlike GW170814, however, it seemed that we only had two detectors observing. LIGO Hanford was undergoing maintenance (the same procedure as when GW170608 occurred). However, after some quick checks, it was established that the Hanford data were actually good to use: the detector had been left alone in the 5 minutes around the signal (phew), so the data were clean (wooh)! We had another three-detector detection.

The big difference that having three detectors makes is a much better localization of the source. For GW190814 we get a beautifully tight localization. This was exciting, as GW190814 could be a neutron star–black hole. The initial source classification (which is always pretty uncertain as it’s done before we have detailed analysis) went back and forth between being a binary black hole with one component in the 3–5 solar mass range, and a neutron star–black hole (which means the less massive component is below 3 solar masses, not necessarily a neutron star). Neutron star–black hole mergers may potentially have electromagnetic counterparts which can be found by telescopes. Not all neutron star–black hole binaries will have counterparts as sometimes, when the black hole is much bigger than the neutron star, the neutron star will be swallowed whole. Even if there is a counterpart, it may be too faint to see (we expect this to be increasingly common as our detectors detect gravitational waves from more distant sources). GW190814’s source is about 240 Mpc away (six times the distance of GW170817, meaning any light emitted would be about 36 times fainter) [bonus note]. Many teams searched for counterparts, but none have been reported. Despite the excellent localization, we have no multimessenger counterpart this time.
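The factor of 36 is just the inverse-square law at work. Here is a minimal sketch of that arithmetic; the function name is my own, and the GW170817 distance is only what "six times" implies, not a separately quoted measurement.

```python
def relative_faintness(distance_far_mpc: float, distance_near_mpc: float) -> float:
    """How many times fainter the same light source appears at the farther distance.

    Uses the inverse-square law: observed flux falls off as 1 / distance**2.
    """
    return (distance_far_mpc / distance_near_mpc) ** 2


# Distance to GW190814's source quoted in the text (about 240 Mpc).
d_gw190814_mpc = 240.0
# Assumption: the text only says "six times the distance of GW170817",
# so the nearer distance is inferred from that statement.
d_gw170817_mpc = d_gw190814_mpc / 6

faintness = relative_faintness(d_gw190814_mpc, d_gw170817_mpc)
print(f"Any counterpart would look about {faintness:.0f} times fainter")
```

This ignores everything else that affects brightness (viewing angle, dust, how much material is ejected), so treat it as the distance effect only.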

Sky localizations for GW190814’s source. The blue dashed contour shows the preliminary localization using only LIGO Livingston and Virgo data, and the solid orange shows the preliminary localization adding in Hanford data. The dashed green contour shows an updated localization used by many for their follow-up studies. The solid purple contour shows our final result, which has an area of just . All contours are for 90% probabilities. Figure 2 of the GW190814 Discovery Paper.

The sky localisation for GW190814 demonstrates nicely how localization works for gravitational-wave sources. We get most of our information from the delay time between the signal reaching the different detectors. With a two-detector network, a single time delay corresponds to a ring on the sky. We kind of see this with the blue dashed localization above, which was the initial result using just LIGO Livingston and Virgo data. There are actually arcs corresponding to two different time delays. This is because the signal is quiet in Virgo, and so we don’t get an absolute lock on the arrival time: if you shift the signal so it’s one cycle different, it still matches pretty well, so we get two possibilities. The arcs aren’t full circles because the phase of the signals and the relative amplitudes (since detectors are not uniformly sensitive in all directions) add extra information. Adding in LIGO Hanford data gives us more information on the timing. The Hanford–Livingston circle of constant time delay slices through the Livingston–Virgo one, leaving us with just the two overlapping islands as possibilities. The sky localizations shifted a little bit as we refined the analysis, but remained pretty consistent.
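To see why one time delay gives one ring, here is a rough sketch of the geometry. For a pair of detectors separated by a baseline of length d, a delay Δt fixes the angle θ between the baseline and the source direction through cos θ = cΔt/d, and every direction at that angle lies on the same ring. The 3000 km baseline, the 5 ms delay and the 200 Hz signal frequency are illustrative assumptions, not values from the analysis.

```python
import math

C_KM_PER_S = 299_792.458  # speed of light


def ring_opening_angle_deg(delay_s: float, baseline_km: float) -> float:
    """Angle between the detector baseline and the source direction.

    delay_s is the arrival time at detector 1 minus the arrival time at
    detector 2; the angle is measured from the axis pointing from
    detector 1 to detector 2. All sky positions at this angle form a ring.
    """
    cos_theta = C_KM_PER_S * delay_s / baseline_km
    if abs(cos_theta) > 1:
        raise ValueError("delay exceeds the light travel time between the detectors")
    return math.degrees(math.acos(cos_theta))


# Assumption: a round-number baseline, roughly the scale of the two LIGO sites.
BASELINE_KM = 3000.0
max_delay_s = BASELINE_KM / C_KM_PER_S  # largest physically possible delay

angle_zero = ring_opening_angle_deg(0.0, BASELINE_KM)   # zero delay: a great circle
angle_5ms = ring_opening_angle_deg(0.005, BASELINE_KM)  # hypothetical 5 ms delay

# Timing ambiguity: slipping by one cycle of a (hypothetical) 200 Hz signal
# changes the delay by 1/200 s and lands on a different ring.
angle_shifted = ring_opening_angle_deg(0.005 + 1 / 200, BASELINE_KM)

print(f"max delay: {max_delay_s * 1e3:.1f} ms")
print(f"rings at {angle_zero:.1f}, {angle_5ms:.1f} and {angle_shifted:.1f} degrees")
```

The real localization also folds in phase and amplitude information, which is what trims the rings down to arcs, so this only captures the timing part of the story.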

Whodunnit?

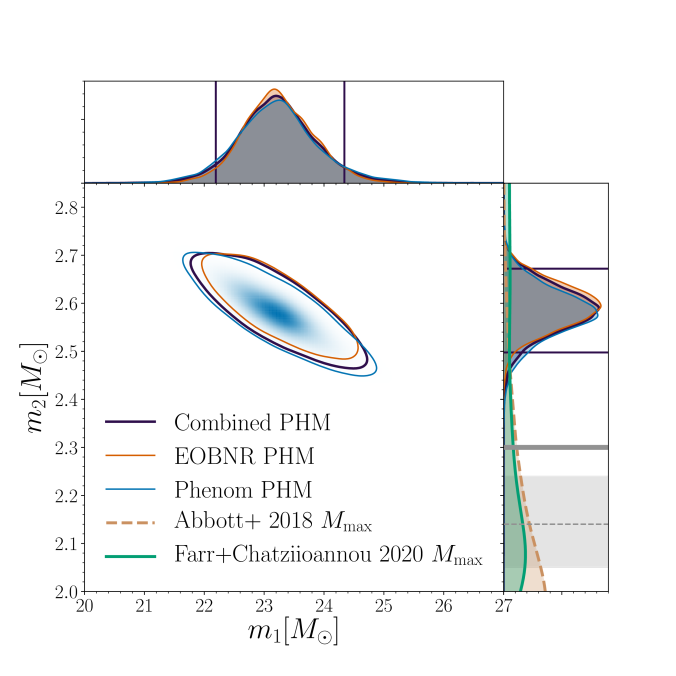

From the gravitational wave signal we inferred that GW190814 came from a binary with one component of about 23 solar masses and the other of about 2.6 solar masses (parameter ranges are quoted at 90% probability throughout). This is remarkable for two reasons: first, the lower mass object is right in the range where we might hit the maximum mass of a neutron star, and second, these are the most asymmetric masses from any of our gravitational wave sources.

Estimated masses for the two components in the binary. We show results for several different waveform models (which include spin precession and higher order multipole moments). The two-dimensional plot shows the 90% probability contour. The one-dimensional plot shows individual masses; the dotted lines mark 90% bounds away from equal mass. Estimates for the maximum neutron star mass are shown for comparison with the mass of the lighter component. Figure 3 of the GW190814 Discovery Paper.

Neutron star or black hole?

Neutron stars are massive balls of stuff™. They are made of matter in its most squished form. A neutron star about 1.4 solar masses would have a radius of only about 12 kilometres. For comparison, that’s roughly the same as trying to fit the mass of M&Ms (plain; for peanut butter it would be different, and of course, more delicious) into the volume of just

M&Ms (ignoring the fact that you can’t perfectly pack them)! Neutron stars are about

times more dense than M&Ms. As you make neutron stars heavier, their gravity gets stronger until at some point the strange stuff™ they are made of can’t take the pressure. At this point the neutron star will collapse down to a black hole. Since we don’t know the properties of neutron star stuff™ we don’t know the maximum mass of a neutron star.
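If you want to redo the M&M comparison yourself, here is a back-of-the-envelope sketch. The neutron star mass and radius come from the text; the mass and volume of a single plain M&M are my own rough assumptions, so only the orders of magnitude should be trusted.

```python
import math

SOLAR_MASS_KG = 1.989e30

# Neutron star from the text: about 1.4 solar masses, radius about 12 km.
ns_mass_kg = 1.4 * SOLAR_MASS_KG
ns_radius_m = 12e3
ns_volume_m3 = 4 / 3 * math.pi * ns_radius_m**3
ns_density_kg_m3 = ns_mass_kg / ns_volume_m3

# Assumptions (not from the text): a plain M&M weighs roughly 0.9 g
# and takes up roughly 0.64 cubic centimetres.
MM_MASS_KG = 0.9e-3
MM_VOLUME_M3 = 0.64e-6
mm_density_kg_m3 = MM_MASS_KG / MM_VOLUME_M3

mms_by_mass = ns_mass_kg / MM_MASS_KG        # M&Ms needed to match the mass
mms_by_volume = ns_volume_m3 / MM_VOLUME_M3  # M&Ms that fit in the volume (perfect packing)
density_ratio = ns_density_kg_m3 / mm_density_kg_m3

print(f"M&Ms matching the mass:   {mms_by_mass:.1e}")
print(f"M&Ms filling the volume:  {mms_by_volume:.1e}")
print(f"neutron star / M&M density: {density_ratio:.1e}")
```

Changing the assumed M&M properties shifts the numbers by a modest factor, but the density ratio stays at roughly a hundred trillion or more.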

We have observed neutron stars of a range of masses. The recently discovered pulsar J0740+6620 may be around 2.1 solar masses, and potentially pulsar J1748−2021B may be around 2.7 solar masses (although that measurement is more uncertain as it requires some strong assumptions about the pulsar’s orbit and its companion star). Using observations of GW170817, estimates have been made that the maximum neutron star mass should be below 2.2 or 2.3 solar masses; using late-time observations of short gamma-ray bursts (assuming that they all come from binary neutron star mergers) indicates an upper limit of 2.4 solar masses, and looking at the observed population of neutron stars, it could be anywhere between 2 and 3 solar masses. About 3 solar masses is a safe upper limit, as it’s not possible to make stuff™ stiff enough to withstand more pressure than that.

At about 2.6 solar masses, it’s not too much of a stretch to believe that the less massive component is a neutron star. In this case, we have learnt something valuable about the properties of neutron star stuff™. Assuming that we have a neutron star, we can infer the properties of neutron star stuff™. We find that for a typical neutron star of 1.4 solar masses, the radius would be and the tidal deformability .

The plot below shows our results fitting the neutron star equation of state, which describes how the density of neutron star stuff™ changes with pressure. The dashed lines show the 90% range of our prior (what the analysis would return with no input information). The blue curve shows results adding in GW170817 (what we would have if GW190814 was a binary black hole); here we prefer neutron stars made of softer stuff™ (which is squishier to hug, and would generally result in more compact neutron stars). Adding in GW190814 (assuming a neutron star–black hole) pushes us back up to stiffer stuff™ as we now need to support a massive maximum mass.

Constraints on the neutron star equation of state, showing how density changes with pressure $p$. The blue curve just uses GW170817, implicitly assuming that GW190814 is from a binary black hole, while the orange shows what happens if we include GW190814, assuming it is from a neutron star–black hole binary. The 90% and 50% credible contours are shown as the dark and lighter bands, and the dashed lines indicate the 90% region of the prior. Figure 8 of the GW190814 Discovery Paper.

What if it’s not a neutron star?

In this case we must have a black hole. In theory black holes can be any mass: you just need to squish enough mass into a small enough space. However, from our observations of X-ray binaries, there seem to be no black holes below about 5 solar masses. This is referred to as the lower mass gap, or the core collapse mass gap. The theory was that when the cores of massive stars collapse, there are different types of explosions and implosions depending upon the core’s mass. When you have a black hole, more material from outside the core falls back than when you have a neutron star. All the extra material would always mean that black holes are born above 5 solar masses. If we’ve found a black hole below this, either this theory is wrong and we need a new explanation for the lack of X-ray observations, or we have a black hole formed via a different means.

Potentially, we could tell the two possibilities apart if we measured the effects of the tidal distortion of the neutron star in the gravitational wave signal. Unfortunately, tidal effects are weaker for more unequal mass binaries. GW190814 is extremely unequal, so we can’t measure anything and say either way. Equally, seeing an electromagnetic counterpart would be evidence for a neutron star, but with such unequal masses the neutron star would likely be eaten whole, like me eating an M&M. The mass ratio means that we can’t be certain what we have.

The calculation we can do is to use past observations of neutron stars and measurements of the stiffness of neutron star stuff™ to estimate the probability that the mass of the less massive component is below the maximum neutron star mass. Using measurements from GW170817 for the stuff™ stiffness, we estimate that there’s only a 3% probability of the mass being below the maximum neutron star mass, and using the observed population of neutron stars the probability is 29%. It seems that it is improbable, but not impossible, that the component is a neutron star.

I’m yet to be convinced one way or the other on black hole vs neutron star [bonus note], but I do like the idea of extra small black holes. They would be especially cute, although you must never try to hug them.

The unequal masses

Most of the binaries we’ve seen with gravitational waves so far are consistent with having equal masses. The exception is GW190412, which has a mass ratio of . The mass ratio changes a few things about the gravitational wave signal. When you have unequal masses, it is possible to observe higher harmonics in the gravitational wave signal: chirps at multiples of the orbital frequency (the dominant two form a perfect fifth). We observed higher harmonics for the first time with GW190412. GW190814 has a more extreme mass ratio

. We again spot the next harmonic in GW190814, this time it is even more clear. Modelling gravitational waves from systems with mass ratios of

is tricky, it is important to include the higher order multipole moments in order to get good estimates of the source parameters.
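The perfect fifth remark is easy to check. The sketch below assumes the standard picture where the dominant emission is at twice the orbital frequency and the next harmonic at three times; the 20 Hz orbital frequency is a made-up value, and the result doesn't depend on it.

```python
import math


def harmonic_frequency(orbital_frequency_hz: float, multiple: int) -> float:
    """Gravitational-wave frequency of the harmonic at the given multiple of the orbital frequency."""
    return multiple * orbital_frequency_hz


f_orb_hz = 20.0  # hypothetical orbital frequency, for illustration only
f_dominant_hz = harmonic_frequency(f_orb_hz, 2)  # dominant harmonic
f_next_hz = harmonic_frequency(f_orb_hz, 3)      # next harmonic

ratio = f_next_hz / f_dominant_hz
# Musical interval in equal-tempered semitones: 12 * log2(frequency ratio).
semitones = 12 * math.log2(ratio)

print(f"frequency ratio {ratio}, about {semitones:.2f} semitones")
```

A 3:2 frequency ratio is the just perfect fifth, a hair wider than the seven semitones of an equal-tempered one. As the binary chirps, both harmonics sweep upwards together and keep that interval.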

Having unequal masses makes some of the properties of the lighter component, like its tidal deformability or its spin, harder to measure. Potentially, it can be easier to pick out the spin of the more massive component. In the case of GW190814, we find that the spin is small, . This is our best ever measurement of black hole spin!

Estimated orientation and magnitude of the two component spins. The distribution for the more massive component is on the left, and for the lighter component on the right. The probability is binned into areas which have uniform prior probabilities, so if we had learnt nothing, the plot would be uniform. The maximum spin magnitude of 1 is appropriate for black holes. On account of the mass ratio, we get a good measurement of the spin of the more massive component, but not the lighter one. Figure 6 of the GW190814 Discovery Paper.

Typically, it is easier to measure the amount of spin aligned with the orbital angular momentum. We often characterise this as the effective inspiral spin parameter. In this case, we measure . Harder to measure is the spin in the orbital plane. This controls the amount of spin precession (wobbling in the spin orientation as the orbital angular momentum is not aligned with the total angular momentum), and is characterised by the effective precession spin parameter. For GW190814, we find

, which is our tightest measurement. It might seem odd that we get our best measurement of in-plane spin in the case when there is no precession. However, this is because if there were precession, we would clearly measure it. Since there is no support for precession in the data, we know that it isn’t there, and hence that the amount of in-plane spin is small.

Implications

While we haven’t solved the mystery of neutron star vs black hole, what can we deduce?

- Einstein is still not wrong yet. Our tests of general relativity didn’t give us any evidence that something was wrong. We even tried a new test looking for deviations in the spin-induced quadrupole moment. GW190814 was initially thought to be a good case to try this, on account of its mass ratio, unfortunately, since there’s little hint of spin, we don’t get particularly informative results. Next time.

- The Universe is expanding about as fast as we’d expect. We have a wonderfully tight localization: GW190814 has the best localization of all our gravitational waves except for GW170817. This means we can cross-reference with galaxy catalogues to estimate the Hubble constant, a measure of the expansion rate of the Universe. We get the distance from our gravitational wave measurement, and the redshift from the catalogue, and putting them together gives the Hubble constant

. From GW190814 alone, we get

(quoting numbers with our usual median and symmetric 90% interval convention; if you like mode and narrowest 68% region, it’s

). If we combine with results for GW170817, we get

(or

) [bonus note].

- The merger rate density for a population of GW190814-like systems is

. If you think you know how GW190814 formed, you’ll need to make sure to get a compatible rate estimate.
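The Hubble constant bullet above boils down to dividing a recession velocity by a distance. Here is a toy sketch of that one step, using the low-redshift approximation that the velocity is the speed of light times the redshift. The candidate redshifts are invented for illustration; the real analysis weighs up many catalogue galaxies and the full distance uncertainty.

```python
C_KM_PER_S = 299_792.458  # speed of light


def hubble_constant(redshift: float, luminosity_distance_mpc: float) -> float:
    """Low-redshift estimate H0 = c * z / d, in km/s/Mpc."""
    return C_KM_PER_S * redshift / luminosity_distance_mpc


# Distance from the gravitational wave measurement (about 240 Mpc in the text).
distance_mpc = 240.0
# Hypothetical redshifts for galaxies inside the localization region.
candidate_redshifts = [0.04, 0.05, 0.06]

h0_values = [hubble_constant(z, distance_mpc) for z in candidate_redshifts]
for z, h0 in zip(candidate_redshifts, h0_values):
    print(f"z = {z:.2f} -> H0 of about {h0:.0f} km/s/Mpc")
```

Without a counterpart we don't know which galaxy hosted the source, which is why each plausible host contributes its own value and the final uncertainty is broad.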

What can we say about potential formation channels for the source? This is rather tricky as many predictions assume supernova models which lead to a mass gap, so there’s nothing with a compatible mass for the lighter component. I expect there will be lots of checking what happens without this assumption.

Given the mass of the black hole, we would expect that it formed from a low metallicity star. That is a star which doesn’t have too many of the elements heavier than hydrogen and helium. Heavier elements lead to stronger stellar winds, meaning that stars are smaller at the end of their lives and it is harder to get a black hole that’s 23 solar masses. The same is true for many of the black holes we’ve seen in gravitational waves.

Massive stars have short lives. The bigger they are, the more quickly they burn up all their nuclear fuel. This has an important implication for the mass of the lighter component: it probably has not grown much since it formed. The bigger component could have formed from the initially bigger star (which is the simpler scenario to imagine). In this case, the black hole forms first, and there is no chance for the lighter component to grow after it forms as it’s sitting next to a black hole. Alternatively, it is possible that the lighter component formed first if, when its parent star started expanding in middle age (as many of us do), it transferred lots of mass to its companion star. The mass transfer would reverse which of the stars was more massive, and we could then have some accretion back onto the lighter compact object to grow it a bit. However, the massive partner star would only have a short lifetime, and compact objects can only swallow material at a relatively low rate, so you wouldn’t be able to grow the lighter component by much more than 0.1 solar masses, not nearly enough to bridge the gap from what we would consider a typical neutron star. We do need to figure out a way to form compact objects of about 2.6 solar masses.

Two possible ways of forming GW190814-like systems through isolated binary evolution. In Channel A the heavier black hole forms first from the initially more massive star. In Channel B, the initially more massive star transfers so much mass to its companion that we get a mass inversion, and the lighter component forms first. The plot is labelled with the orbital separation, the orbital inclination, and the time since the stars started their life on the main sequence. The letters on the right indicate the evolution phase: ZAMS is zero-age main sequence, MS is main sequence (burning hydrogen), CHeB is core helium burning (once the hydrogen has been used up), and BH and NS mean black hole and neutron star. At low metallicities (when stars have few elements heavier than hydrogen and helium), the two channels are about as common; as metallicity increases, Channel A becomes more common. Figure 6 of Zevin et al. (2020).

The mass ratio is difficult to produce. It’s not what you would expect for dynamically formed binaries in globular clusters (as you’d expect heavier objects to pair up). It could maybe happen in the discs around active galactic nuclei, although there are lots of uncertainties about this, and since this is only a small part of space, I wouldn’t expect a large number of events. Isolated binaries (or higher multiples) can form these mass ratios, but they are rare for binaries that go on to merge. Again, it might be difficult to produce enough systems to explain our observation of GW190814. We need to do some more sleuthing to figure out how binaries form.

Epilogue

The LIGO and Virgo gravitational wave detectors embody decades of work by thousands of scientists across the globe. It took many hard years of research to create the technology capable of observing gravitational waves. Many doubted it would ever be possible. Finally, in 2015, we succeeded. The first detection of gravitational waves opened a new field of astronomy: our goal was not to just detect gravitational waves once, but to use them to explore our Universe. Since then we have continued to work improving our detectors and our analyses. More discoveries have come. LIGO and Virgo are revolutionising our understanding of astrophysics, and GW190814 is the latest advancement in our knowledge. It will not be the last. Gravitational wave astronomy thrives thanks to, and as a consequence of, many people working together towards a common goal.

If a few thousand people can work together to imagine, create and operate gravitational wave detectors, think what we could achieve if millions, or billions, or if we all worked together. Let’s get to work.

Title: GW190814: Gravitational waves from the coalescence of a 23 solar mass black hole with a 2.6 solar mass compact object

Journal: Astrophysical Journal Letters; 896(2):L44(20); 2020

arXiv: 2006.12611 [astro-ph.HE]

Science summary: The curious case of GW190814: The coalescence of a stellar-mass black hole and a mystery compact object

Data release: Gravitational Wave Open Science Center; Parameter estimation results

Rating: 🍩🐦🦚🦆❔

Bonus notes

MESA Summer School

Modules for Experiments in Stellar Astrophysics (MESA) is a code for simulating the evolution of stars. It’s pretty neat, and can do all sorts of cool things. The summer school is a chance to be taught how to use it as well as some theory behind the lives of stars. The school is aimed at students (advanced undergrads and postgrads) and postdocs starting out using or developing the code, but they’ll let faculty attend if there’s space. I was lucky enough to get a spot together with my fantastic students Chase, Monica and Kyle. I was extremely impressed by everything. The ratio of demonstrators to students was high, all the sessions were well thought out, and ice cream was plentiful. I would definitely recommend attending if you are interested in stellar evolution, and if you want to build the user base for your scientific code, this is certainly a wonderful model to follow.

Detection significance

For our final (for now) detection significance we only used data from LIGO Livingston and Virgo. Although the Hanford data are good, we wouldn’t have looked at this time without the prompt from the other detectors. We therefore need to be careful not to bias ourselves. For simplicity we’ve stuck with using just the two detectors. Since Hanford would boost the significance, these results should be conservative. GstLAL and PyCBC identified the event with false alarm rates of better than 1 in 100,000 years and 1 in 42,000 years, respectively.

Distance

The luminosity distance of GW190814’s source is estimated as about 240 Mpc. The luminosity distance is a measure which incorporates the effects of the signal travelling through an expanding Universe, so it’s not quite the same as the actual distance between us and the source. Given the uncertainties on the luminosity distance, it would have taken the signal somewhere between 600 million and 850 million years to reach us. It therefore set out during the Neoproterozoic era here on Earth, which is pretty cool.

In this travel time, the signal would have covered about 6 sextillion kilometres, or to put it in easier to understand units, about 400,000,000,000,000,000,000,000,000 M&Ms laid end-to-end. Eating that many M&Ms would give you about calories. That seems like a lot of energy, but it’s less than

of the energy emitted as gravitational waves for GW190814.
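For anyone wanting to check the sextillions, here is a quick sketch converting the light-travel time into a distance, and then working out how long an M&M the end-to-end count implies. It simply multiplies the travel time by the speed of light, ignoring the expansion of the Universe along the way, and the 365.25-day year is my own choice of convention.

```python
C_KM_PER_S = 299_792.458
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # assumption: Julian year


def light_travel_distance_km(travel_time_years: float) -> float:
    """Distance covered by a signal moving at the speed of light for the given time."""
    return C_KM_PER_S * SECONDS_PER_YEAR * travel_time_years


# Travel-time range quoted in the text.
shortest_km = light_travel_distance_km(600e6)
longest_km = light_travel_distance_km(850e6)

SEXTILLION = 1e21
print(f"between {shortest_km / SEXTILLION:.1f} and {longest_km / SEXTILLION:.1f} sextillion km")

# The text's figures: about 6 sextillion km and about 4e26 M&Ms end-to-end.
CM_PER_KM = 1e5
implied_mm_length_cm = 6e21 * CM_PER_KM / 4e26
print(f"implied M&M length: {implied_mm_length_cm:.1f} cm")
```

The quoted 6 sextillion kilometres sits comfortably inside that range.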

Betting

Given current uncertainties on what the maximum mass of a neutron star should be, it is hard to offer odds for whether or not the smaller component of GW190814’s binary is a black hole or neutron star. Since it does seem higher mass than expected for neutron stars from other observations, a black hole origin does seem more favoured, but as GW190425 showed, we might be missing the full picture about the neutron star population. I wouldn’t be too surprised if our understanding shifted over the next few years. Consequently, I’d stretch to offering odds of one peanut butter M&M to one plain chocolate M&M in favour of black holes over neutron stars.

Hubble constant

Using the Dark Energy Survey galaxy catalogue, Palmese et al. (2020) calculate a Hubble constant of (mode and narrowest 68% region) using GW190814. Adding in GW170814 they get

as a gravitational-wave-only measurement, and including GW170817 and its electromagnetic counterpart gives

.