GW200115 and GW200105 are the first gravitational-wave candidates announced from the second half of LIGO and Virgo’s third observing run (O3b). They may be our first ever observations of neutron star–black hole binaries [bonus note]. These mixed binaries of one neutron star and one black hole have long proved elusive, but we are now on our way to revealing their secrets.

The population of compact objects (black holes and neutron stars) observed with gravitational waves and with electromagnetic astronomy, including a few that are uncertain. The sources for GW200115 (left) and GW200105 (right) are highlighted. Source: Northwestern

The first gravitational-wave signal ever detected, GW150914, came from a binary black hole system: two black holes that inspiralled together to form a bigger black hole. (I hope you are all imagining a bloopy chirp to accompany this). We had never before observed a binary black hole system. However, binary black holes have proved to be the most common source of gravitational waves, and we are now starting to understand their properties. We found our next type of gravitational-wave source with GW170817, which came from a binary neutron star system (two neutron stars that orbited each other). Before we had gravitational-wave astronomy, we knew this type of binary existed as we had observed pulsars in binaries thanks to radio astronomy. Yet, our second binary neutron star observation, GW190425, still showed that we didn’t know everything about their properties. After finding binary black holes and binary neutron stars, what about a mixed neutron star–black hole binary? These should exist, but finding evidence for them has proved difficult.

Time to tick neutron star–black hole binaries off the checklist. Part of a comic by Nutsinee Kijbunchoo drawn following the discovery of GW170817 showing Rai Weiss rather happy with his work. [Update]

Previous candidates

The first hints of neutron star–black hole binaries came in the first half of LIGO and Virgo’s third observing run (O3a, yes we are the best at thinking up names). The gravitational-wave candidate GW190426_152155 (the best at names) looks like it could have come from a neutron star–black hole binary. However, this is a quiet signal, so we are not sure whether it is real or a false alarm.

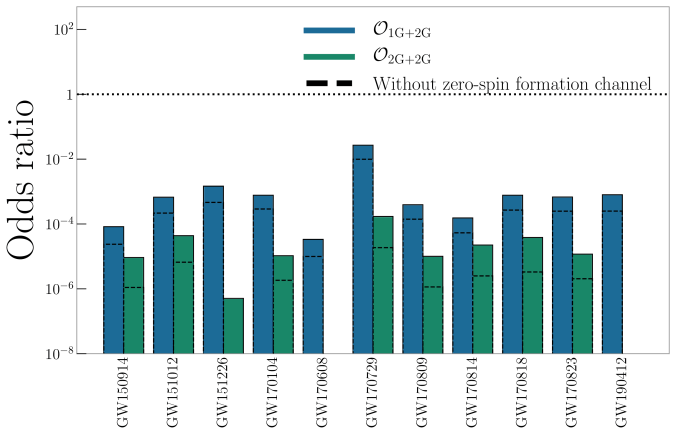

Our detection pipelines search the data from the detectors looking for signals. Our searches designed to specifically look for signals from binaries match the data against templates of what the signals should look like. From this comparison, they consider two pieces of information: how loud a signal is (its signal-to-noise ratio), and how consistent the signal is with the template. These are combined into a ranking statistic, and by comparing the ranking statistic with values produced by a background of noise, we can compute a false alarm rate of how often something at least this signal-like would happen in random noise. For GW190426_152155, this is , which isn’t too great.
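To make the idea of a false alarm rate concrete, here is a minimal sketch of the counting involved. The function name, the background values and the 1000 years of background are all made up for illustration; real pipelines build their ranking statistics and backgrounds in far more sophisticated ways.

```python
from bisect import bisect_left

def false_alarm_rate(candidate_stat, background_stats, background_time_yr):
    """Rate (per year) of background triggers ranked at least as highly as the candidate."""
    ordered = sorted(background_stats)
    # Number of noise triggers with a ranking statistic >= the candidate's.
    n_louder = len(ordered) - bisect_left(ordered, candidate_stat)
    return n_louder / background_time_yr

# Made-up ranking statistics from a hypothetical 1000 years of noise-only data.
background = [5.0, 5.5, 6.1, 6.3, 7.2, 8.0, 8.4, 9.1]
far = false_alarm_rate(8.0, background, background_time_yr=1000.0)
print(f"FAR = {far:.3f} per year")
```

If nothing in the background is as signal-like as the candidate, the count is zero, and all we can really quote is an upper limit of about one per background time.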

The false alarm rate is not the end of the story though: we need to consider the true alarm rate: how often we expect to detect such a signal. If something is an everyday occurrence, you don’t need much evidence to convince yourself it’s real. Consider the quality of a photo you would need to convince yourself there was a horse walking around outside, and the quality needed to convince yourself there is a unicorn. For gravitational waves, a false alarm rate of would be enough to give you a fair (but not necessarily conclusive) probability of the signal being real if the source were a binary black hole, as we know they are pretty common. We don’t yet know how common gravitational waves from neutron star–black hole binaries are, but the fact that we are lacking good examples indicates that they are at least somewhat rare. Therefore, with the balance of probability, it seems plausible that GW190426_152155 is noise, and the hunt needs to continue.
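The horse-versus-unicorn argument can be sketched with a toy calculation. This assumes the probability of a candidate being real is just the true alarm rate divided by the total rate of comparably signal-like things (real or noise); the function name and all the numbers are illustrative assumptions, not values from any analysis.

```python
def probability_real(true_alarm_rate, false_alarm_rate):
    """Toy probability that a candidate is a real signal rather than noise."""
    return true_alarm_rate / (true_alarm_rate + false_alarm_rate)

# Illustrative rates only (per year): the same false alarm rate, two source types.
far = 1.0
common_source = probability_real(true_alarm_rate=10.0, false_alarm_rate=far)
rare_source = probability_real(true_alarm_rate=0.1, false_alarm_rate=far)
print(f"common source: {common_source:.2f}, rare source: {rare_source:.2f}")
```

The same false alarm rate gives a convincing candidate when the source is common (the horse) and an unconvincing one when the source is rare (the unicorn).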

Estimated total mass and mass ratio $q = m_2/m_1 \leq 1$ of the binary sources for the candidates in O3a. The contours mark the 90% credible regions. The dashed lines mark a robust upper limit on the maximum neutron star mass. Figure 6 of the GWTC-2 Paper.

The next potential candidate was GW190814. This is a super clear detection. However, the nature of the source is more mysterious. The primary (the more massive object in the binary) is definitely a black hole, but the secondary, at around $2.6 M_\odot$ (where $M_\odot$ is a solar mass), is potentially too large to be a neutron star. We’re not entirely sure of the maximum mass a neutron star can be before collapsing. Hence, we’re not quite sure if we have a massive neutron star, or a really small black hole. I think the black hole is more likely. The curious nature of GW190814’s source means we are still missing an unambiguous neutron star–black hole.

Discovery

Observations in O3b changed everything. Within the space of ten days in January 2020 [bonus note], we collected two neutron star–black hole candidates: GW200105_162426 (GW200105 for short) and GW200115_042309 (GW200115).

GW200115 is a clear detection. All three detectors were observing at the time, and we get a good signal in both LIGO Livingston and LIGO Hanford (Virgo, being less sensitive currently, has less informative data). From these observations, our search algorithm GstLAL estimates a false alarm rate of , PyCBC estimates

, and MBTA (being used for the first time for final search results) estimates

. All of the search algorithms agree that this is a significant detection.

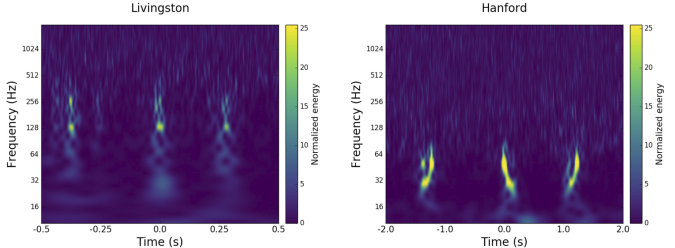

GW200105 is more difficult. LIGO Hanford was offline at the time, so we only have LIGO Livingston and Virgo. In Livingston data we can see a beautiful chirp, but in Virgo the signal is too quiet for the detection algorithms to use. As was the case for GW190425, we must try to establish the significance using a single detector.

Time–frequency plots for GW200105 (left) and GW200115 (right) as measured by LIGO Hanford, LIGO Livingston and Virgo. LIGO Hanford was not observing at the time of GW200105. The chirp of a binary coalescence is clearest in Livingston for GW200105; these are usually hard to see for these types of signals. The Livingston data for GW200105 is shown after glitch subtraction, and the Livingston data for GW200115 shows light-scattering glitches at low frequencies. Figure 1 of the NSBH Discovery Paper.

When we have multiple detectors, we can ask how often we would expect to see the same signal at compatible times in multiple detectors. It is much less likely that the same random bit of noise would appear in one detector and, at a compatible time, in another. We can estimate how often this would happen, for example, by comparing data from the detectors at different times. Considering many different time offsets, we can build up statistics for tens of thousands of years, even though we have only been observing for a few months (the upper limit on the false alarm rate quoted for GW200115 is because it stands out after we have exhausted all these time slides). When we have a single detector, we can’t do this.
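Here is a toy sketch of the time-slide idea, assuming each detector just gives us a list of noise trigger times. The function names, trigger counts, coincidence window and slide step are all hypothetical choices for illustration; real searches also compare the signal parameters and handle data boundaries properly rather than wrapping times around.

```python
import bisect
import random

def count_coincidences(times_a, times_b, window):
    """Count pairs of triggers, one from each detector, within `window` seconds."""
    sorted_b = sorted(times_b)
    total = 0
    for t in times_a:
        lo = bisect.bisect_left(sorted_b, t - window)
        hi = bisect.bisect_right(sorted_b, t + window)
        total += hi - lo
    return total

def time_slide_background(times_a, times_b, duration, window, slide_step, n_slides):
    """Count accidental coincidences over many unphysical time offsets.

    Returns (number of accidental coincidences, effective background time).
    """
    n_accidental = 0
    for k in range(1, n_slides + 1):
        # Shift the second detector by an offset no real signal could have.
        shifted_b = [(t + k * slide_step) % duration for t in times_b]
        n_accidental += count_coincidences(times_a, shifted_b, window)
    return n_accidental, n_slides * duration

random.seed(1)
duration = 100_000.0  # seconds of toy observing time
noise_a = [random.uniform(0, duration) for _ in range(200)]
noise_b = [random.uniform(0, duration) for _ in range(200)]
n_acc, t_bkg = time_slide_background(
    noise_a, noise_b, duration, window=0.015, slide_step=10.0, n_slides=1000
)
print(f"{n_acc} accidental coincidences in {t_bkg:.0f} s of effective background")
```

A thousand slides turn about a day of toy data into a few years of effective background, which is how a few months of observing can become tens of thousands of years of statistics.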

GW200105 stands out from anything we have seen in the data we’ve analysed. We could therefore assign a false alarm rate of one per observing time. However, that doesn’t quite encode everything we know. We expect louder noise artifacts to be rarer than quieter ones. An outlier with signal-to-noise ratio of 12 should be rarer than one with signal-to-noise ratio of 11 (and GW200105 is over 13), and hence we can use this knowledge to try to extrapolate a false alarm rate.
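To illustrate what extrapolating could look like, here is a toy sketch that fits an exponential tail to the signal-to-noise ratios of noise triggers and extends it to louder values. This is not how GstLAL does it; the exponential form, the function name, the threshold and the trigger values are all assumptions made for illustration.

```python
import math

def extrapolated_far(noise_snrs, observing_time_yr, candidate_snr, threshold):
    """Extrapolate the rate of noise louder than `candidate_snr` from an exponential tail fit."""
    tail = [snr - threshold for snr in noise_snrs if snr >= threshold]
    if sum(tail) == 0:
        raise ValueError("need triggers above the threshold to fit the tail")
    # Maximum-likelihood decay constant for an exponential distribution.
    decay = len(tail) / sum(tail)
    rate_above_threshold = len(tail) / observing_time_yr
    return rate_above_threshold * math.exp(-decay * (candidate_snr - threshold))

# Made-up single-detector noise triggers from a hypothetical half year of data.
noise = [8.1, 8.3, 8.2, 8.9, 9.4, 8.6, 10.1, 8.4]
for snr in (11.0, 12.0, 13.0):
    far = extrapolated_far(noise, observing_time_yr=0.5, candidate_snr=snr, threshold=8.0)
    print(f"SNR {snr:.0f}: extrapolated FAR = {far:.2e} per year")
```

The extrapolated rate drops as the signal-to-noise ratio goes up, but it is only as trustworthy as the assumed shape of the tail, which is why a conservative choice is sensible.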

Detection statistics for GW200105, GW200115 and GW190426_152155, showing how they compare to background data. The plot shows the signal-to-noise ratio and signal-consistency statistic from the GstLAL algorithm. The coloured density plot shows the distribution of background triggers. LHO indicates a trigger from LIGO Hanford, and LLO indicates a trigger from LIGO Livingston. GW200105 is distinct from anything else seen in O3. However, GW200105 is calculated to be less significant than GW200115 as it only has a trigger from a single detector. Figure 3 of the NSBH Discovery Paper.

Currently, only GstLAL can calculate single-detector false alarm rates. PyCBC and MBTA both identify the same feature in the data, but cannot assign a significance to this. Using GstLAL’s extrapolation, which is chosen to be conservative (not as conservative as one per observing time, but a better representation of the data), we calculate a false alarm rate of . This is good enough to be interesting, and better than for GW190426_152155, but not enough to be absolutely conclusive. I think we may see some active development of estimating single-detector false alarm rates (or lowering the threshold for Virgo data to be used) in the future to try to address these difficulties.

It is very tempting to look at GW200105’s clear chirp and convince yourself it must be real. However, our detection algorithms are more sensitive than our eyes and more reliable. They are carefully tested, and build up their statistics by analysing large chunks of data. Hence, we should acknowledge that the difficulty in assigning a false alarm rate is an intrinsic difficulty when you only have so much data. Even the best signal can only end up with a modest false alarm rate. It’s kind of like winning the lottery on your third go if you don’t know how the lottery works: you can estimate that the probability of winning is about 1/3, even if you suspect it should be much smaller. The results computed for this paper only use a fraction of O3b, so we may be able to do a little better in the future.

Sources

Let us assume both signals are real: where do they come from? Do we at last have our indisputable neutron star–black hole binaries?

We infer [bonus note] that GW200115 comes from a binary with component masses and

(or

and

if we restrict the secondary’s spin to

). The primary here looks to be a black hole. It is one of the smallest we have seen. The uncertainties on the measurement potentially take it into the hypothesised lower mass gap between neutron stars and black holes suggested from X-ray observations (and somewhat questioned by GW190814); however, there is a 70% chance that the mass is

, so it is pretty consistent with the population of black holes we’ve seen in X-ray binaries. The secondary is perfectly in the neutron star range. Hence, this looks like a great neutron star–black hole binary candidate.

For GW200105, we infer that the primary has mass and secondary has mass

(or

and

with low secondary spin). The primary is a nice black hole, the secondary is a nice plump neutron star. It is towards the more massive end of the distribution we have seen with radio observations, but it is consistent with past observations. Unlike for GW190814, we do not have any trouble explaining such a mass given what we know about the stiffness of neutron star stuff™. This is another good neutron star–black hole binary candidate.

Estimated primary and secondary masses $m_1$ and $m_2$ for neutron star–black hole binary candidates. The two-dimensional plot shows the 90% probability contour. For GW200105 and GW200115 we show results for two different spin priors for the secondary. The one-dimensional plot shows individual masses; the vertical lines mark 90% bounds away from equal mass. Estimates for the maximum neutron star mass (based upon Galactic neutron stars and studies of the equation of state) are shown for comparison with the mass of the secondary. Figure 4 of the NSBH Discovery Paper.

The masses for GW200115 overlap nicely with those inferred for GW190426_152155 and

[bonus note]. The uncertainties for GW190426_152155 are larger, on account of it being quieter. Perhaps this could indicate this is fairly typical for neutron star–black hole binaries (and we might need to revise that true alarm rate)? It’s still too early to say, but I very much look forward to finding out!

The masses align nicely with expectations for neutron star–black hole binaries, so there are no surprises there. Ideally, we would confirm that we have seen neutron stars by measuring the tidal distortion of the neutron star [bonus note]. Unfortunately, these effects get harder to measure when the asymmetry in masses gets more significant, and we can’t pick anything out of the data. However, we did compare the secondary masses to various expectations for the maximum neutron star mass, and find that there’s over a 93% probability that the secondaries are safely below this. In conclusion, I think we have a good case for having completed our set of binaries and found neutron star–black hole binaries.

Estimated orientation and magnitude of the two component spins for GW200105 (left) and GW200115 (right). The distribution for the more massive primary component is on the left, and for the lighter secondary component on the right. The probability is binned into areas which have uniform prior probabilities, so if we had learnt nothing, the plot would be uniform. The maximum spin magnitude of 1 is appropriate for black holes. The solid line shows the 90% credible region using the high spin prior (which is used for the rest of the plot) and the dashed line shows the 90% contour for the low-spin prior. Figure 6 of the NSBH Discovery Paper.

The spins are more interesting. Spins range from zero for non-spinning, to one for a maximally spinning black hole. As a consequence of the large mass asymmetry, we measure the spin of the black holes better than for the neutron stars. For GW200105, we can constrain the spin magnitude to be at 90% probability (or

with the low neutron star spin prior). This matches what we have seen for a lot of our black holes (as for GW190814’s primary, but probably not for GW190412’s primary): their spins are small and nicely consistent with being zero.

For GW200115, the primary spin is also consistent with zero. However, there is also support for larger spins, and intriguingly, spin misaligned (or even antialigned as there’s little evidence of spin components in the orbital plane) with the orbital angular momentum. It is often convenient to work with the effective inspiral spin, which is a mass-weighted combination of the two spins projected along the direction of the orbital angular momentum. A positive value indicates the spins are overall aligned with the orbital angular momentum, while a negative value indicates the spins are overall misaligned. For GW200105, we find (or

with low neutron star spin). This is consistent with zero, and what you would expect if spins were small, or if there were no preferred alignment. For GW200115 however, we find

(

with low neutron star spin). This is still consistent with positive or zero values, but prefers negative values.
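For those who like to see the definition written out, here is a small sketch of the effective inspiral spin as the mass-weighted combination described above. The function name is my own, and the masses and spins in the example are made-up round numbers, not the measured values.

```python
import math

def effective_inspiral_spin(m1, m2, chi1, chi2, tilt1, tilt2):
    """Mass-weighted sum of spin components along the orbital angular momentum.

    chi1 and chi2 are dimensionless spin magnitudes (0 to 1); tilt1 and tilt2
    are the angles (radians) between each spin and the orbital angular momentum.
    """
    return (m1 * chi1 * math.cos(tilt1) + m2 * chi2 * math.cos(tilt2)) / (m1 + m2)

# Hypothetical binary: a spinning black hole with a non-spinning neutron star.
aligned = effective_inspiral_spin(6.0, 1.5, 0.3, 0.0, 0.0, 0.0)
antialigned = effective_inspiral_spin(6.0, 1.5, 0.3, 0.0, math.pi, 0.0)
in_plane = effective_inspiral_spin(6.0, 1.5, 0.3, 0.0, math.pi / 2, 0.0)
print(f"aligned: {aligned:+.2f}, antialigned: {antialigned:+.2f}, in-plane: {in_plane:+.2f}")
```

Because the black hole carries most of the mass, its spin dominates the sum, and a spin lying entirely in the orbital plane contributes nothing.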

Generally aligned spins are expected for binaries formed from two stars that lived their lives together as a binary. The stars would have formed from the same cloud of gas, so you would expect the stars to start out rotating the same way. Tides and mass transfer between the stars should also help to align spins. Supernova explosions could tilt the spins, but it’s hard to get a complete reversal without disrupting the binary. This did happen for the double pulsar, so it’s not impossible, but overall you would expect it to be rare. However, for binaries formed dynamically, the spins would be randomly oriented.

Does the spin for GW200115 thus point to a dynamical origin? That would be unexpected, as isolated evolution generally predicts higher rates of forming neutron star–black hole binaries than dynamical channels. Dynamical channels tend to prefer making more massive binaries. The spin is perfectly consistent with being small and aligned, so perhaps that is the correct answer, and there’s nothing unexpected to see.

Estimated primary mass, spin component in the orbital plane, and spin component aligned with the orbital angular momentum for GW200115. The (off-diagonal) two-dimensional plots show the correlations between parameters. The solid lines indicate 50% and 90% credible regions with the high-spin prior for the secondary, and the dashed lines show the same for the low-spin prior. The (on-diagonal) one-dimensional plots show probability densities. The vertical lines indicate 90% credible intervals. The black lines show the priors. Figure 7 of the NSBH Discovery Paper.

Since the spin is correlated with the mass, if we impose that GW200115’s primary spin is small and aligned, we also find that the primary mass is towards the upper end of its range. This would keep it safely out of the proposed range of the lower mass gap. I’m not sure if that is of any physical relevance (as I’m not sure if I believe there is a gap), but it is potentially worth keeping in mind if you want to model the progenitor (you need to fit mass and spin together).

I look forward to lots of studies looking at how to form these systems.

Rates

Now we have confirmation that neutron star–black hole binaries exist, how many do we think there are out there? To go from our detections to a merger rate density, we need to assume something about the population of neutron star–black hole binaries (we need to know about the systems that we could have observed but didn’t). This is rather tricky, as neutron star–black hole binaries could potentially have a diverse range of properties, and we can’t be sure of this distribution with only a couple of observations. Therefore, we’ve tried a few different things.

First, we considered what are the rates of binaries that match the inferred properties of the two sources. We infer that the rate of GW200115-like binaries is using the results of GstLAL (and

using PyCBC). The rate of GW200105-like binaries is

(since PyCBC couldn’t detect this event, we could only set an upper limit, which is less interesting). GW200115 is less massive than GW200105, and so could not be detected to as great a distance. Therefore, since we’ve detected one of each, it means that the rate of GW200115-like binaries should be a bit higher. If we assume all neutron star–black hole binaries are like one of the two, we find an overall event-based rate of

.
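To give a feel for how a single detection turns into a rate with big uncertainties, here is a toy sketch. It assumes a Poisson likelihood for the number of detections, a Jeffreys prior on the rate (proportional to one over the square root of the rate), and a known sensitive time–volume. The function name and the time–volume value are hypothetical, and the real calculation has to fold in the uncertainty on the source properties too.

```python
import math

def rate_credible_interval(n_events, sensitive_vt, n_grid=200_000):
    """Median and 90% interval for a Poisson rate with a Jeffreys prior (R**-0.5)."""
    # Work on a grid in the dimensionless expected count x = R * VT.
    x_max = n_events + 10.0 * math.sqrt(n_events + 1.0) + 20.0
    dx = x_max / n_grid
    xs = [(i + 0.5) * dx for i in range(n_grid)]
    # Posterior density (unnormalised): Poisson likelihood times Jeffreys prior.
    density = [x ** (n_events - 0.5) * math.exp(-x) for x in xs]
    norm = sum(density)
    targets = [0.05, 0.5, 0.95]
    quantiles = []
    cumulative = 0.0
    for x, d in zip(xs, density):
        cumulative += d / norm
        while len(quantiles) < len(targets) and cumulative >= targets[len(quantiles)]:
            quantiles.append(x / sensitive_vt)
    low, median, high = quantiles
    return low, median, high

vt = 0.02  # hypothetical sensitive time-volume in Gpc^3 yr, for illustration only
low, median, high = rate_credible_interval(n_events=1, sensitive_vt=vt)
print(f"rate = {median:.0f} (+{high - median:.0f} / -{median - low:.0f}) per Gpc^3 per yr")
```

With only one event, the upper end of the 90% interval is more than twenty times the lower end, which is why these first rate estimates are so broad.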

Probability distribution for the neutron star–black hole binary merger rate density. The green curve shows the event-based rate assuming all neutron star–black hole binaries are like GW200105 or GW200115. The black line assumes a broader population that also includes GW190814 and higher mass black holes. The vertical lines mark the 90% credible interval. Figure 9 of the NSBH Discovery Paper.

The second approach is much more agnostic: we consider all output from our detection pipelines over a plausible mass range. The population here is defined more for convenience than anything else. We picked search triggers (down to a signal-to-noise ratio) corresponding to binaries with a primary mass between and

and a secondary mass between

and

. The upper limit on the primary mass is set by the limits of our waveforms. Potentially, this mass range could catch some binary neutron stars or binary black holes too. Therefore, we consider a mixture model and probabilistically assign candidates to being either noise, binary neutron star (if both components are below

), binary black hole (if both components are above

), and neutron star–black hole binaries (for things in between). I think we’ve been very inclusive in defining the neutron star–black hole space here, both excluding the possibility of binary neutron star components above

(which I think unlikely, but possible), and binary black hole components below

(which I think probable). Therefore, we should absolutely not be missing any neutron star–black holes (GW190814’s source is counted as a neutron star–black hole in this calculation). This rate comes out as

.

I don’t think these will rule out any models, but they give the ballpark to aim for. As we find more neutron star–black hole candidates, these rates should evolve as our uncertainties will shrink, and we get a better understanding of the source population.

Predictions for the neutron star–black hole binary merger rate density as modelled by the COMPAS population synthesis code. The different models illustrate variations in the input physics, highlighting the range of predictions for isolated binary evolution. Other channels could potentially form neutron star–black hole binaries too. Figure 9 of Broekgaarden et al. (2021).

Summary

We have finally found our neutron star–black hole binaries. They’re pretty neat. These are the first discoveries from O3b. They will not be the last.

Title: Observation of gravitational waves from two neutron star–black hole coalescences

Journal: Astrophysical Journal Letters; 915(1):L5(25)

arXiv: 2106.15163 [astro-ph.HE]

Science summary: A new source of gravitational waves: Neutron star–black hole binaries

Data release: GW200105; GW200115

GW200105 Rating: 🐦🍨🥇😮

GW200115 Rating: 🐭🍨😮🙃🏆

Bonus notes

Cookies

I like to think of neutron star–black hole binaries as the mirror counterparts of fluffernutter cookies. Black holes are black and super dense, completely unlike marshmallows. Neutron stars are made of something mysterious that we don’t know the properties of, but we think all neutron stars are made of the same type of stuff™, whereas peanut butter is made of well known ingredients, but has both smooth and crunchy equations of state. Despite the difference in ingredients, for both, when we mix the two types we get something delicious.

Even years

Previously, all our LIGO–Virgo discoveries came during odd-numbered years, so I was kind of hoping for a quiet 2020. This didn’t work out.

Waveform models

One of the most difficult things with inferring the properties of neutron star–black hole binaries is the waveform models that we use. We need accurate models to compare with the data to get good estimates of the parameters. Unfortunately, we don’t have models that include all the physics we want (spin precession, higher-order multipole moments, and the effects of the neutron star stuff™). From our tests, it seems like spin precession and higher-order multipole moments are more important. The latter is certainly important for asymmetric binaries. Therefore, for our main results, we use binary black hole waveforms that include spin precession and higher-order multipole moments (but no neutron star stuff™ effects). These models should be a pretty good representation of the overall physics (especially if the neutron star gets swallowed whole). However, they may not give the best estimate of the final black hole mass. In the paper, we also used neutron star–black hole waveforms that include neutron star stuff™ effects but not spin precession and higher-order multipole moments, but I think it’s a bit confusing to mix the two results here, so I’ll skip over final masses and spins.

GW190426_152155’s properties

While GW190426_152155 agrees nicely with GW200115’s masses, its other properties are somewhat different. Its effective inspiral spin is (compared with

), and its distance is

(compared with

). The sky positions are also not significantly overlapping.

Electromagnetic observations

An electromagnetic counterpart, as was found for GW170817, would confirm the presence of stuff™, and that we didn’t just have two black holes. However, with these mass black holes, we would expect the neutron stars to be pretty much swallowed whole (like me consuming a fluffernutter cookie) with nothing to see [bonus bonus note]. So far nothing has been reported, which is about as surprising as failing to find a needle in a haystack, when there is no needle.

Ejecta

We estimate that the amount of neutron star stuff ejected during the merger is less than $10^{-6} M_\odot$. This is very small by astronomical standards, but is still pretty large. It’s around a third of the mass of the Earth, and would correspond to around 1,000,000,000,000,000,000,000 elephants. Sadly, it is not expected that material ejected from neutron stars would directly turn into elephants, and elephants do remain endangered.
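For anyone wanting to check the elephant arithmetic, here is a quick sketch. The mass of the Earth is a standard approximate value; the mass of an elephant is my own assumption of a round 2 tonnes (a smallish elephant), so treat the answer as an order-of-magnitude estimate.

```python
EARTH_MASS_KG = 5.97e24   # approximate mass of the Earth
ELEPHANT_MASS_KG = 2.0e3  # assumed mass of one (smallish) elephant

# Around a third of the mass of the Earth, as quoted in the text.
ejecta_mass_kg = EARTH_MASS_KG / 3
n_elephants = ejecta_mass_kg / ELEPHANT_MASS_KG
print(f"about {n_elephants:.0e} elephants")
```

Heavier elephants would bring the count down by a factor of a few, but it stays around the twenty-one zeros mark.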