Understanding how stars work is a fundamental problem in astrophysics. We can’t open up a star to investigate its inner workings, which makes it difficult to test our models. Over the years, we have developed several ways to sneak a peek into what must be happening inside stars, such as by measuring solar neutrinos, or using asteroseismology to measure how sound travels through a star. In this paper, we propose a new way to examine the hearts of stars using gravitational waves.

Gravitational waves interact very weakly with stuff. Whereas light gets blocked by material (meaning that we can’t see deeper than a star’s photosphere), gravitational waves will happily travel through pretty much anything. This property means that gravitational waves are hard to detect, but it also means that they’ll happily pass through an entire star. While the material that makes up a star will not affect the passing of a gravitational wave, its gravity will. The mass of a star can lead to gravitational lensing: a slight deflection, magnification and delay of a passing gravitational wave. If we can measure this lensing, we can reconstruct the mass of the star, and potentially map out its internal structure.
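To get a feel for the size of the effect, here is a minimal sketch of the deflection as a function of how far from the centre of the star the wave passes. It is not the calculation from the paper: it assumes the thin-lens, geometric-optics formula (deflection = 4 G M_proj / (c² b), with M_proj the mass projected inside the impact parameter b), ignores any wave-optics effects, and uses a toy uniform-density sphere instead of a realistic stellar model. The function names are made up for illustration.

```python
import math

# Physical constants in SI units.
C = 299_792_458.0            # speed of light, m/s
GM_SUN = 1.32712440018e20    # G times the solar mass, m^3/s^2
R_SUN = 6.957e8              # nominal solar radius, m
RAD_TO_ARCSEC = 180.0 / math.pi * 3600.0


def projected_mass_fraction(b: float, radius: float = R_SUN) -> float:
    """Fraction of the star's mass inside a line-of-sight cylinder of radius b.

    ASSUMPTION: toy uniform-density sphere, not a realistic stellar profile.
    """
    if b >= radius:
        return 1.0  # the wave passes outside the star and feels all the mass
    return 1.0 - (1.0 - (b / radius) ** 2) ** 1.5


def deflection_angle(b: float, gm: float = GM_SUN, radius: float = R_SUN) -> float:
    """Thin-lens deflection angle in radians for impact parameter b in metres."""
    if b <= 0.0:
        return 0.0  # no net deflection straight through the centre, by symmetry
    return 4.0 * gm * projected_mass_fraction(b, radius) / (C ** 2 * b)


if __name__ == "__main__":
    for frac in (0.25, 0.5, 1.0, 2.0):
        alpha = deflection_angle(frac * R_SUN)
        print(f"b = {frac:4.2f} R_sun: deflection = {alpha * RAD_TO_ARCSEC:.2f} arcsec")
```

At the edge of the Sun this gives the familiar 1.75 arcseconds. Inside the star the answer depends on how the mass is distributed, which is exactly why measuring the lensing at different impact parameters tells you about the interior. Swapping in a centrally concentrated profile for `projected_mass_fraction` would change the inner values while leaving everything outside the star the same.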

Two types of eclipse: the eclipse of a distant gravitational wave (GW) source by the Sun, and gravitational waves from an accreting millisecond pulsar (MSP) eclipsed by its companion. Either scenario could enable us to see gravitational waves passing through a star. Figure 2 of Marchant et al. (2020).

We propose looking at gravitational waves from eclipsing sources, where a gravitational wave source is behind a star. As the alignment of the Earth (and our detectors), the star and the source changes, the gravitational wave will travel through different parts of the star, and we will see a different amount of lensing, allowing us to measure the mass of the star at different radii. This sounds neat, but how often will we be lucky enough to see an eclipsing source?

To date, we have only seen gravitational waves from compact binary coalescences (the inspiral and merger of two black holes or neutron stars). These are not a good source for eclipses. The chance that they travel through a star is small (as space is pretty empty) [bonus note]. Furthermore, we might not even be able to work out that this happened. The signal is relatively short, so we can’t compare the signal before and during an eclipse. Another type of gravitational wave signal would be much better: a continuous gravitational wave signal.

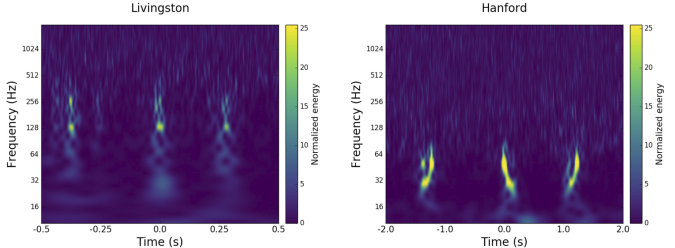

Probability of observing at least one eclipsing source amongst a number of observed sources. Compact binary coalescences (CBCs, shown in purple) are the rarest; continuous gravitational waves (CGWs) eclipsed by the Sun (red) or by a companion (red) are more common. Here we assume companions are stars about a tenth the mass of the neutron star. The number of neutron stars with binary companions is estimated using the COSMIC population synthesis code. Results are shown for eclipses where the gravitational waves get within distance of the centre of the star. Figure 1 of Marchant et al. (2020).

Continuous gravitational waves are produced by rotating neutron stars. They are pretty much perfect for searching for eclipses. As you might guess from their name, continuous gravitational waves are always there. They happily hum away, sticking to pretty much the same note (they’d get pretty annoying to listen to). Therefore, we can measure them before, during and after an eclipse, and identify any changes due to the gravitational lensing. Furthermore, we’d expect that many neutron stars would be in close binaries, and therefore would be eclipsed by their partner. This would happen each time they orbit, potentially giving us lots of juicy information on these stars. All we need to do is measure the continuous gravitational wave…

The effect of the gravitational lensing by a star is small. We performed detailed calculations for our Sun (using MESA), and found that for the effects to be measurable you would need an extremely loud signal. The signal-to-noise ratio would need to be in the hundreds during the eclipse for the measurement precision to be good enough to notice the imprint of lensing. To map out how things changed as the eclipse progressed, you’d need signal-to-noise ratios many times higher than this. As an eclipse by the Sun lasts for only a small fraction of the time, we’re going to need some really loud signals (at least signal-to-noise ratios of 2500) to see these effects. We will need the next generation of gravitational wave detectors.
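The jump from hundreds during the eclipse to thousands overall can be sketched with a bit of arithmetic. This assumes the standard result that, for a steady signal in stationary noise, the squared signal-to-noise ratio builds up in proportion to observing time, so the part collected during the eclipse scales with the square root of the eclipse fraction. The numbers below (100 during the eclipse, half a day of eclipse per year) are illustrative guesses, not values taken from the paper, and the function name is hypothetical.

```python
import math


def required_total_snr(snr_during_eclipse: float, eclipse_fraction: float) -> float:
    """Total signal-to-noise ratio needed to reach a target value during eclipse.

    ASSUMPTION: squared signal-to-noise ratio accumulates linearly with time,
    so snr_eclipse = snr_total * sqrt(eclipse_fraction).
    """
    if not 0.0 < eclipse_fraction <= 1.0:
        raise ValueError("eclipse_fraction must be in (0, 1]")
    return snr_during_eclipse / math.sqrt(eclipse_fraction)


if __name__ == "__main__":
    # ASSUMPTION: illustrative inputs only, not taken from the paper.
    target_snr = 100.0
    fraction = 0.5 / 365.25  # roughly half a day behind the Sun each year
    total = required_total_snr(target_snr, fraction)
    print(f"Eclipse fraction: {fraction:.2e}")
    print(f"Total signal-to-noise ratio needed: {total:.0f}")
```

With these inputs the requirement comes out at a few thousand, which is the same ballpark as the figure quoted above. A source eclipsed by a close binary companion on every orbit would spend a much larger fraction of its time in eclipse, so the penalty would be correspondingly smaller.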

We are currently thinking about the next generation of gravitational wave detectors [bonus note]. The leading ideas are successors to LIGO and Virgo: detectors which cover a large range of frequencies to detect many different types of source. These will be expensive (billions of dollars, euros or pounds), and need international collaboration to finance. However, I also like the idea of smaller detectors designed to do one thing really well. Potentially these could be financed by a single national lab. I think eclipsing continuous waves are the perfect source for this—instead of needing a detector sensitive over a wide frequency range, we just need to be sensitive over a really narrow range. We will be able to detect continuous waves before we are able to see the impact of eclipses. Therefore, we’ll know exactly what frequency to tune for. We’ll also know exactly when we need to observe. I think it would be really awesome to have a tunable narrowband detector, which could measure the eclipse of one source, and then be tuned for the next one, and the next. By combining many observations, we could really build up a detailed picture of the Sun. I think this would be an exciting experiment—instrumentalists, put your thinking hats on!

Let’s reach for (the centres of) the stars.

arXiv: 1912.04268 [astro-ph.SR]

Journal: Physical Review D; 101(2):024039(15); 2020

Data release: Eclipses of continuous gravitational waves as a probe of stellar structure

CIERA story: Using gravitational waves to see inside stars

Why does the sun really shine? The Sun is a miasma of incandescent plasma

Bonus notes

Silver lining

Since signals from compact binary coalescences are so unlikely to be eclipsed by a star, we don’t have to worry that our measurements of the source properties are being messed up by this type of gravitational lensing distorting the signal. Which is nice.

Prospects with LISA

If you were wondering if we could see these types of eclipses with the space-based gravitational wave observatory LISA, the answer is sadly no. LISA observes lower frequency gravitational waves. Lower frequency means longer wavelength, so long in fact that the wavelength is larger than the size of the Sun! Since the size of the Sun is so small compared to the gravitational wave, it doesn’t leave the same imprint: the wave effectively skips over the gravitational potential.
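You can check the wavelength comparison yourself, since gravitational waves travel at the speed of light and the wavelength is just c divided by the frequency. The two frequencies below are representative choices of mine (1 mHz for LISA, 100 Hz for a ground-based detector), not numbers from the paper.

```python
C = 299_792_458.0   # speed of light, m/s
R_SUN = 6.957e8     # nominal solar radius, m


def wavelength_in_solar_diameters(frequency_hz: float) -> float:
    """Gravitational-wave wavelength expressed in units of the Sun's diameter."""
    wavelength_m = C / frequency_hz
    return wavelength_m / (2.0 * R_SUN)


if __name__ == "__main__":
    # ASSUMPTION: representative frequencies chosen for illustration.
    for label, freq in (("LISA-like, 1 mHz", 1e-3), ("ground-based, 100 Hz", 100.0)):
        ratio = wavelength_in_solar_diameters(freq)
        print(f"{label}: wavelength = {ratio:.3g} solar diameters")
```

At 1 mHz the wavelength is a couple of hundred times the width of the Sun, whereas at 100 Hz it is only a few thousand kilometres, a tiny fraction of the Sun’s size. That is why the higher frequency waves can pick up an imprint of the Sun’s structure and the LISA ones cannot.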